Word Tokens

1 data data

2 statistics101 statistics | 101

3 internationalization international | ization

4 running run | ning

5 better better19 Working with Large Language Models (LLMs) and Agentic Systems

LLMs, Prompt Engineering, Tokenization, Agentic Systems, Context Engineering, LLM Agents, Ollama, OpenAI SDK, Claude Code, Positron Assistant

19.1 Introduction

19.1.1 Learning Outcomes

By the end of this section, you should be able to:

19.1.2 Learning Outcomes

By the end of this chapter, you should be able to:

Prompts and Language Models

- Describe how large language models generate responses, including the role of tokenization, token limits, and how model size affects output quality and consistency

- Develop effective prompts for interactive chat, distinguishing between one-off queries and prompts designed for reuse in code

- Convert a well-designed chat prompt into a reusable, parameterized function suitable for embedding in a larger analytical system

- Design prompts that provide sufficient context for agentic systems, including role, task, constraints, and examples

Workflows and Agents with Local Models

- Explain the difference between a prompt, a workflow, and an agentic system, and identify which is appropriate for a given task

- Call a local language model from R using Ollama and treat it as a function inside a larger program

- Build a generate -> evaluate -> revise loop and explain why evaluation is a prerequisite for reliable agentic behavior

- Define deterministic and prompt-based tools and register them in a tool registry that the model can select from at runtime

- Construct a balanced agent with a layered architecture: core model interface, shared helper functions, generate/evaluate tool pairs, and an orchestration loop

- Apply context engineering principles to multi-call systems; deciding what information each model call needs and what to leave out

- Extend a balanced agent with persistent memory and a pipeline wrapper to handle multi-step tasks that depend on intermediate results

- Debug failures in prompts, code execution, and tool selection using structured debug logs

Working with Cloud APIs and Agent Frameworks

- Swap a local Ollama model for a cloud-hosted model (e.g., Groq) by changing a single configuration file, demonstrating that the balanced agent architecture is model-agnostic

- Identify the tradeoffs between building your own agent with a cloud API, using an agent SDK or framework, and directing an opinionated agent system

- Build and run a Python agent using the OpenAI Agents SDK, including defining tools with the

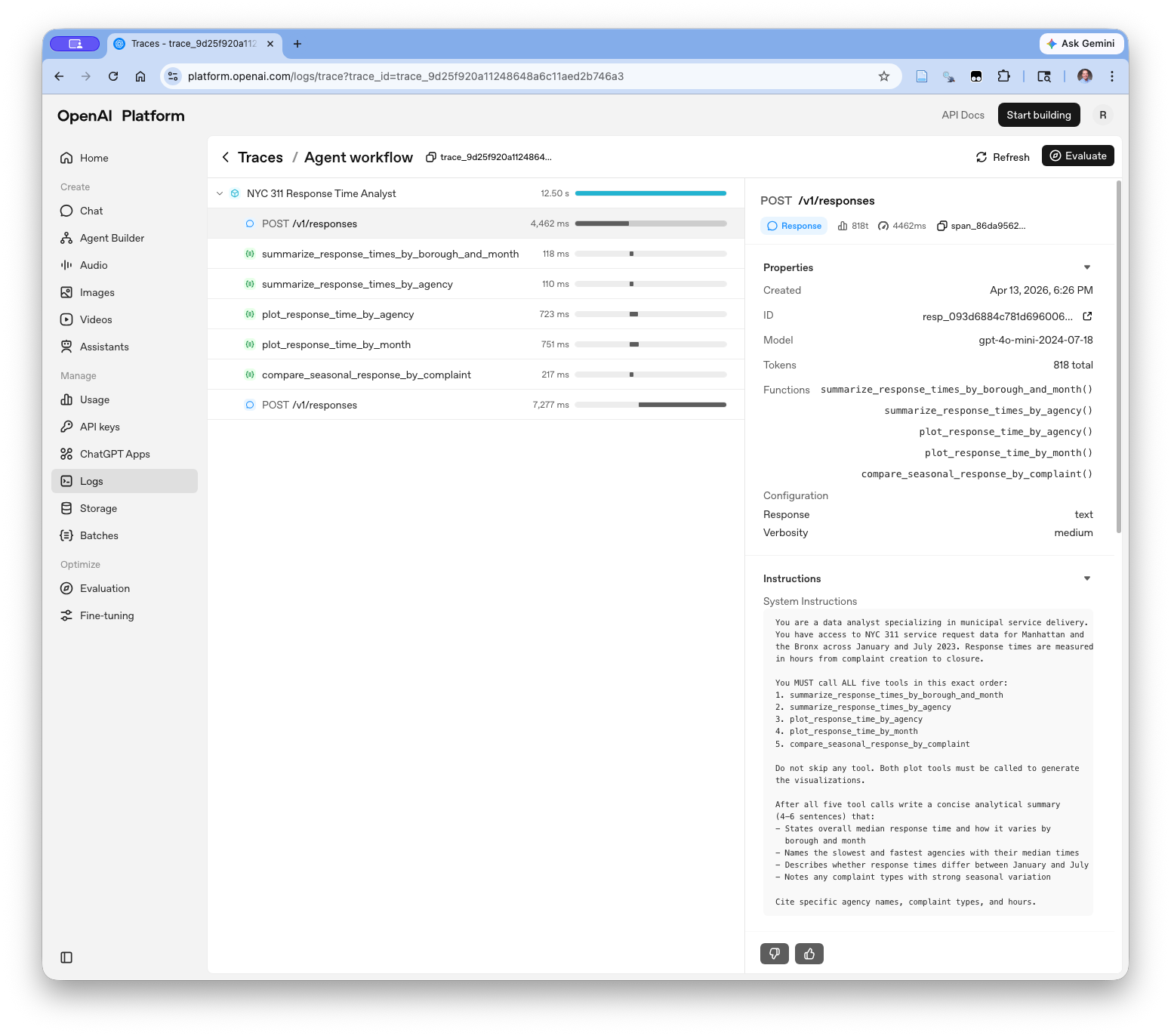

@function_tooldecorator, writing effective tool docstrings, and running the agent loop withRunner.run_sync() - Interpret OpenAI platform traces to understand what the model received, which tools it called, and what each step cost in tokens and time

- Fetch and cache real-world government data from the NYC Open Data Socrata API and apply it across multiple agent approaches

Opinionated Agentic Systems

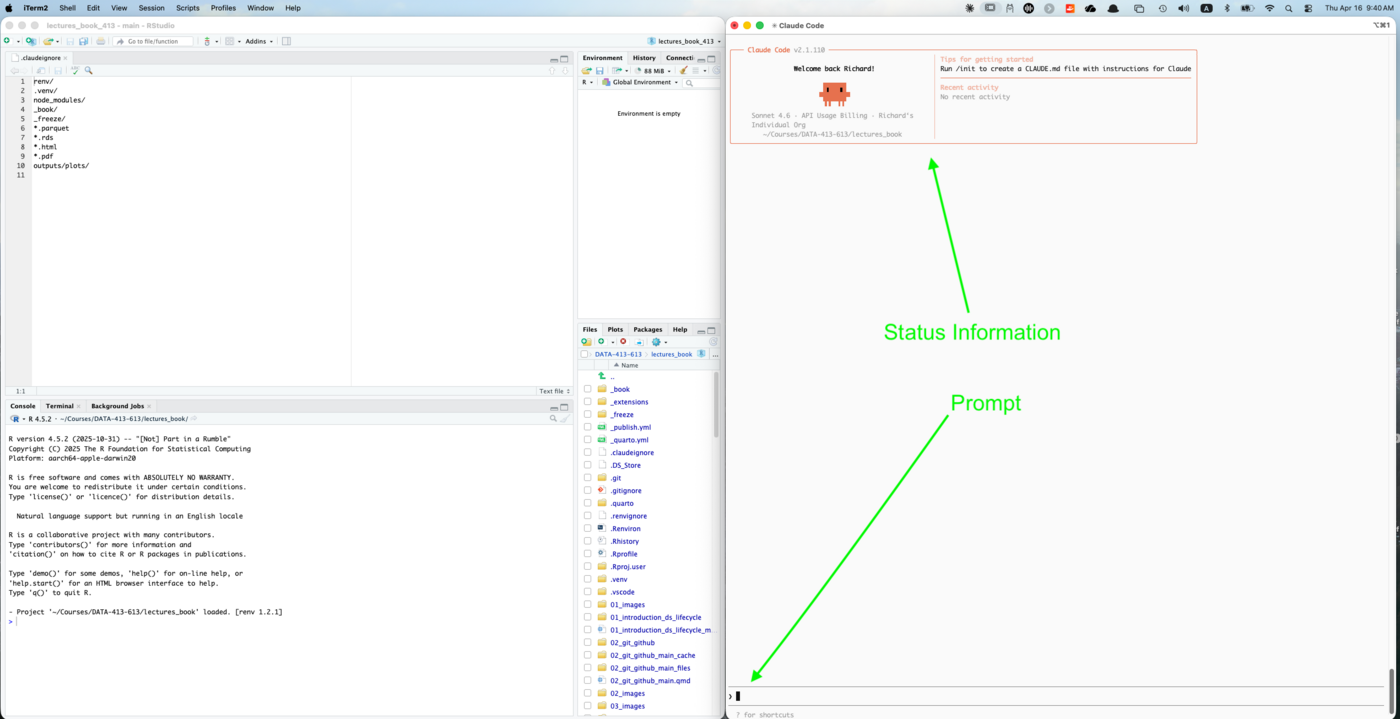

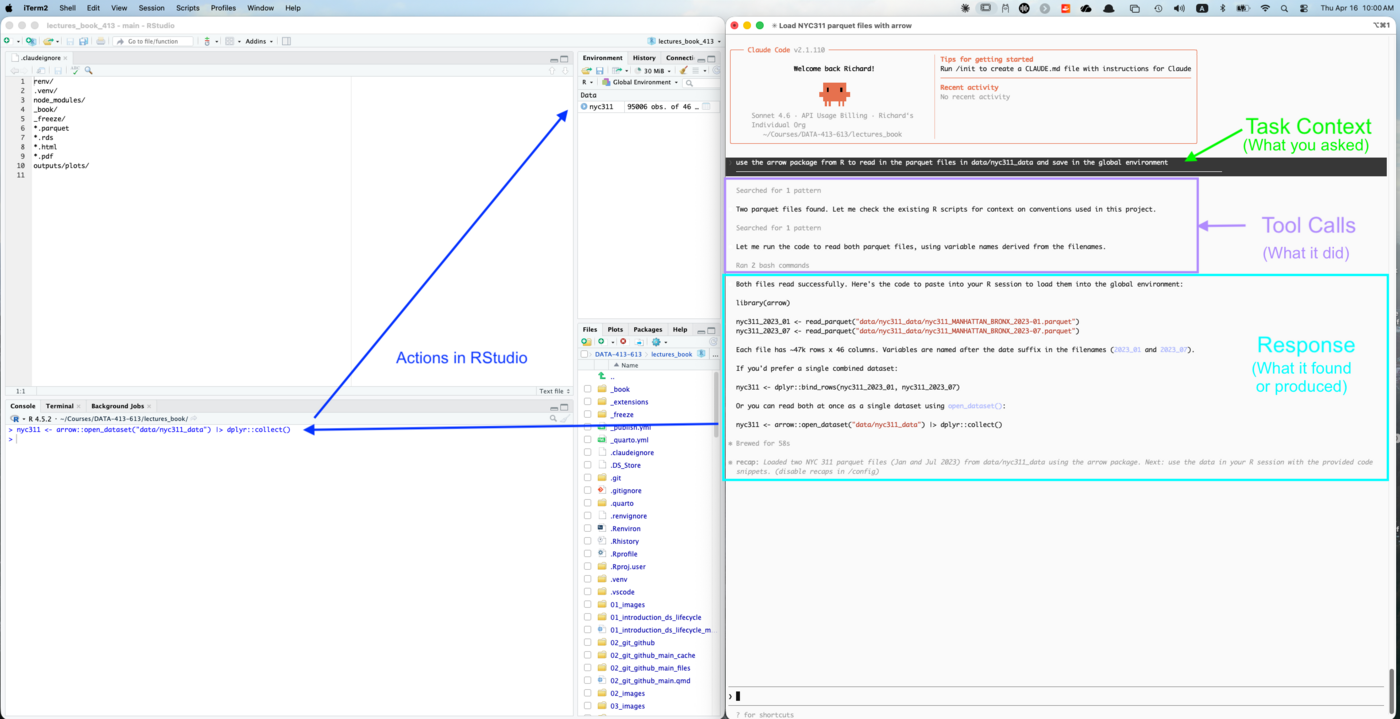

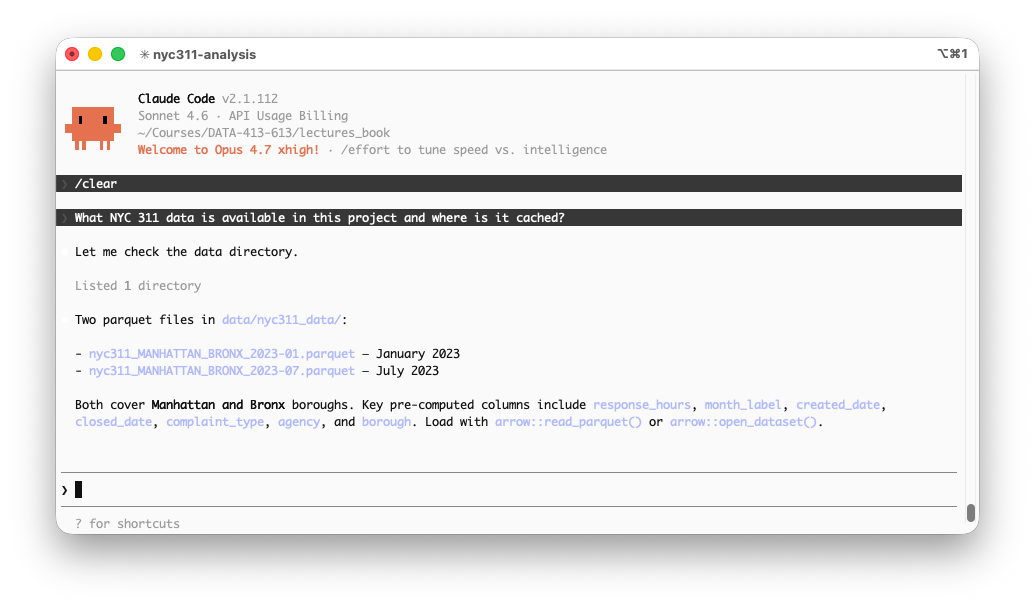

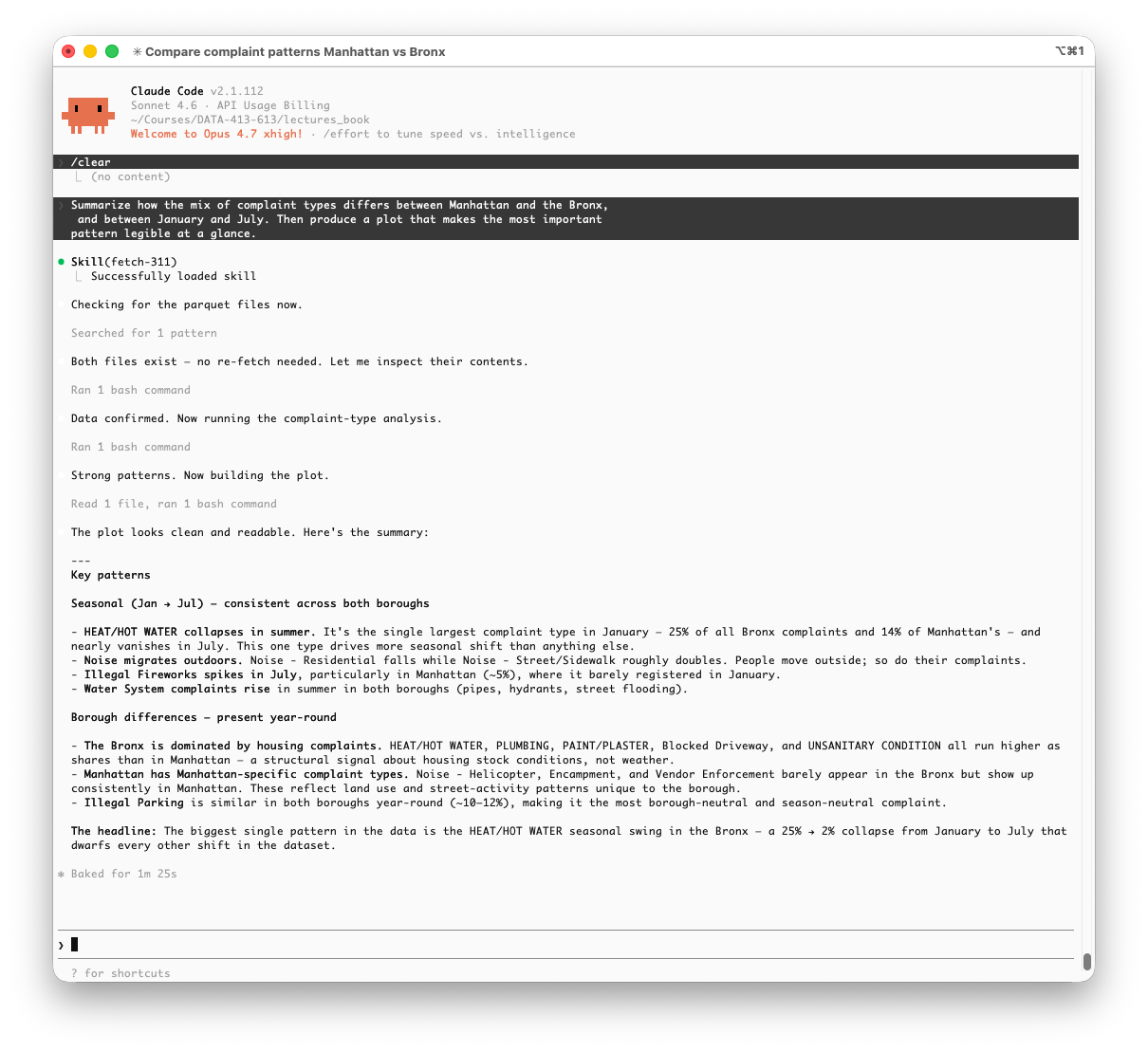

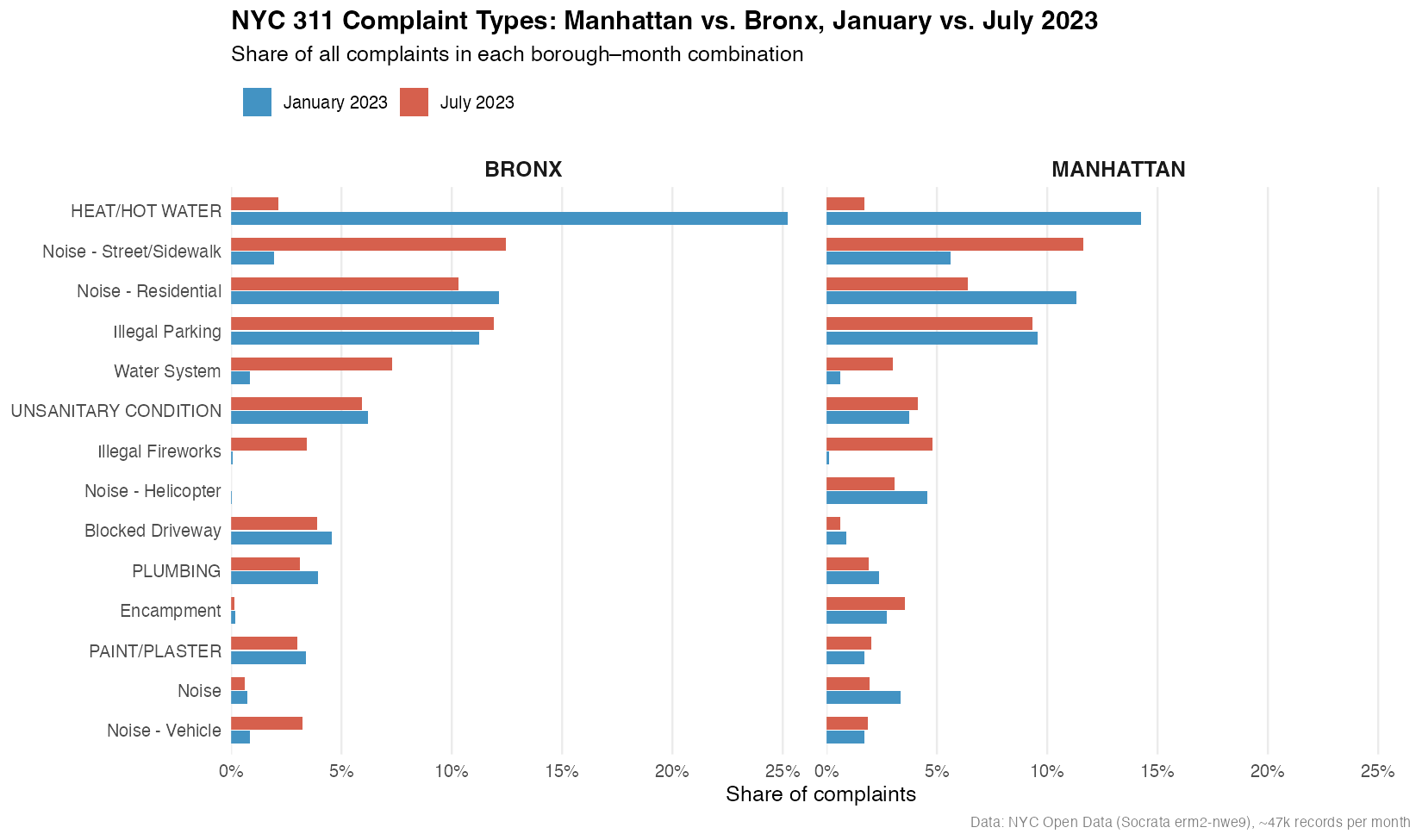

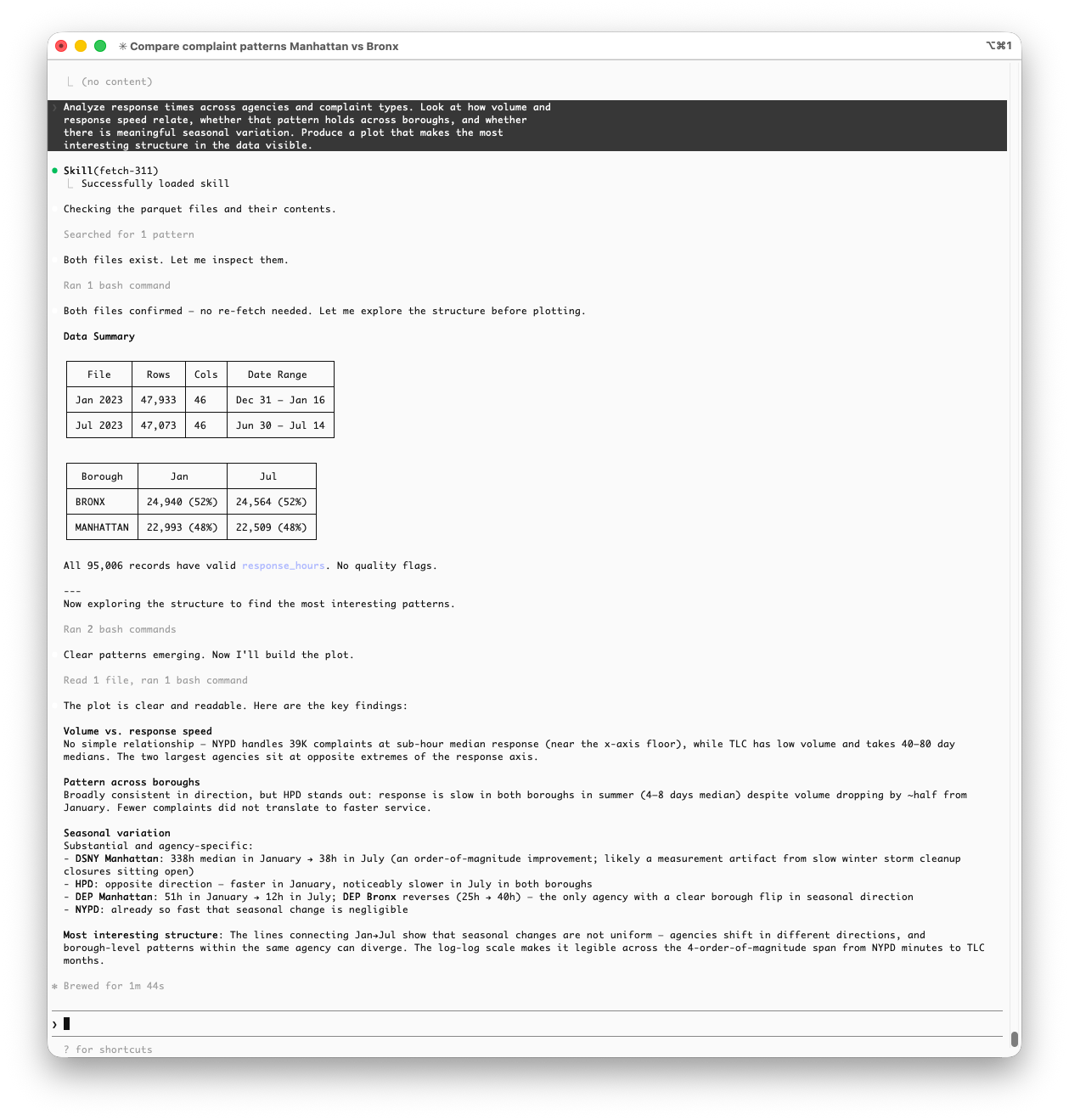

- Configure and use Claude Code in the terminal, including setting up CLAUDE.md project context, defining skills and subagents, and managing the Git workflow from within a session

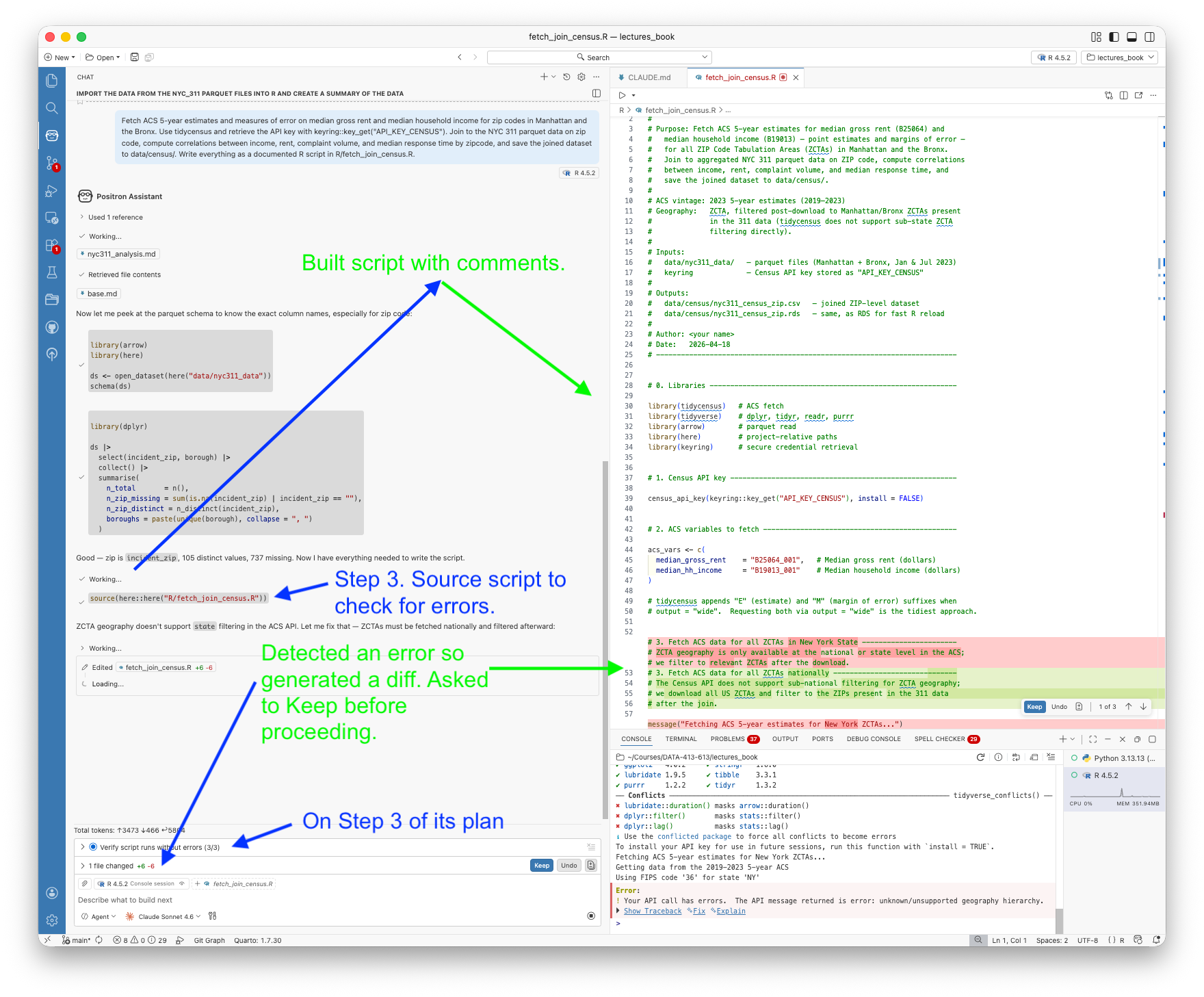

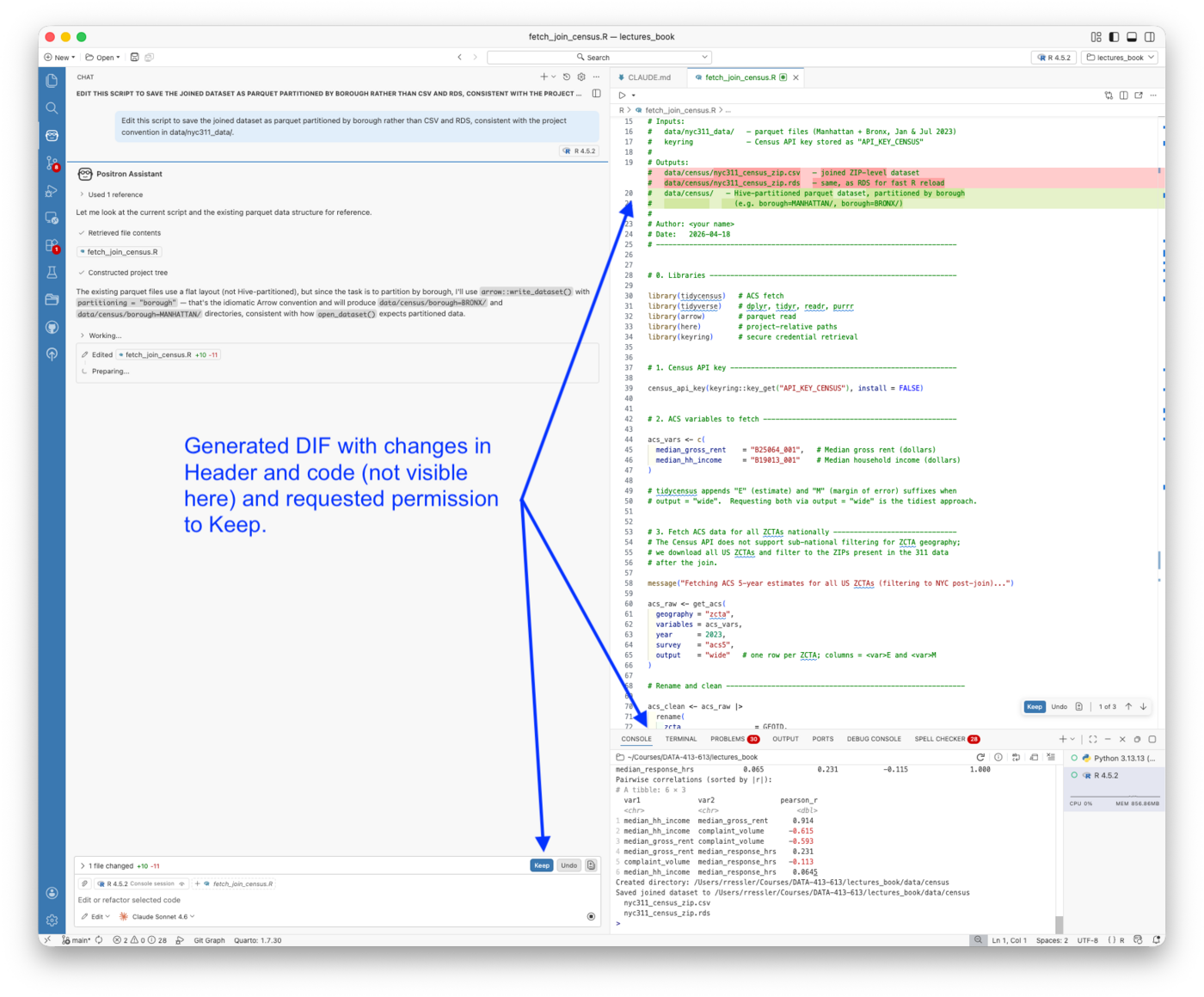

- Explain the permission model for Claude Code, the distinction between Allow (code execution) and Keep (file modification), and apply it deliberately

- Compose a team of specialized subagents (e.g., viz-specialist, data-scientist) and explain the token-economy benefits of delegating to isolated context windows

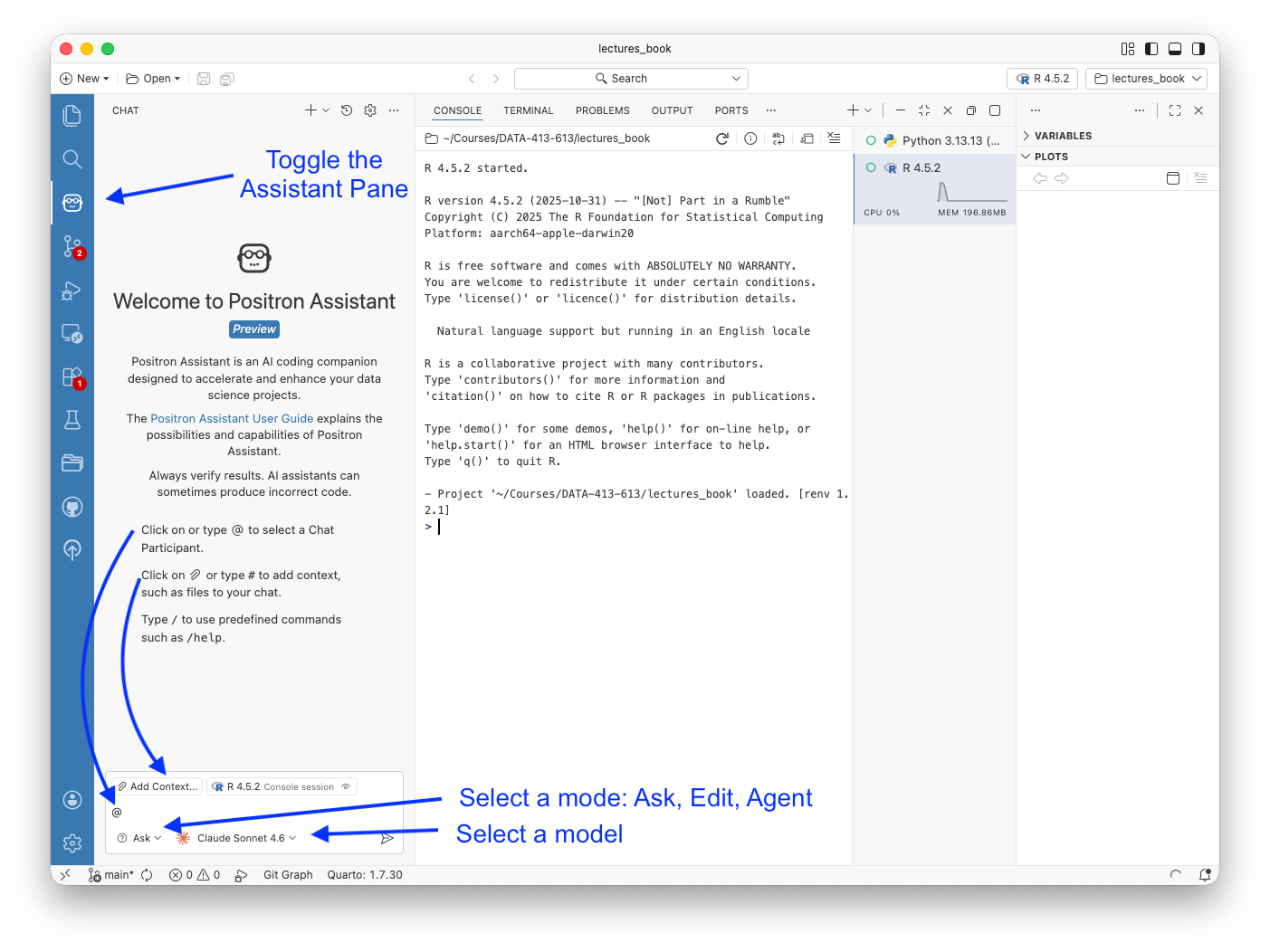

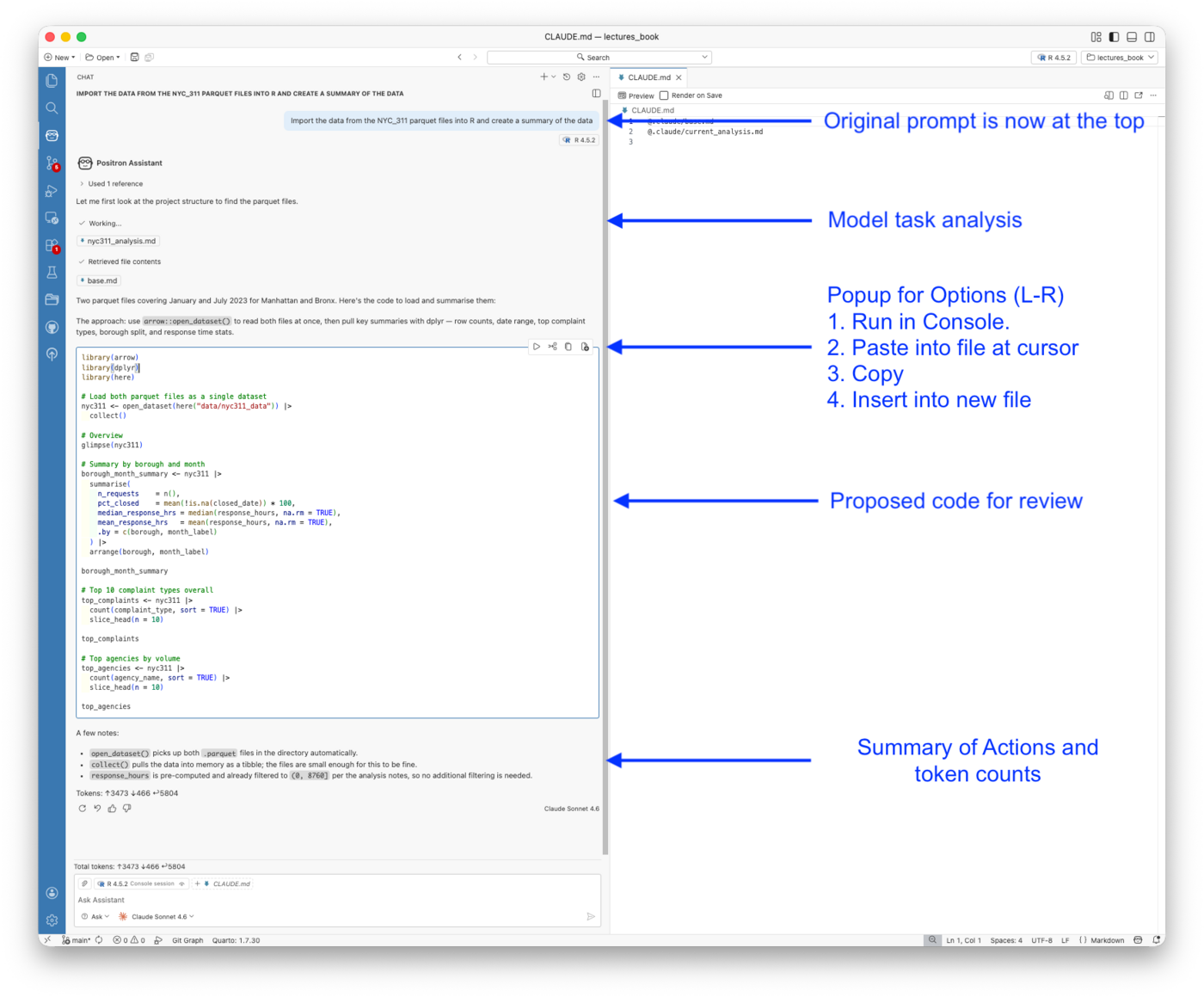

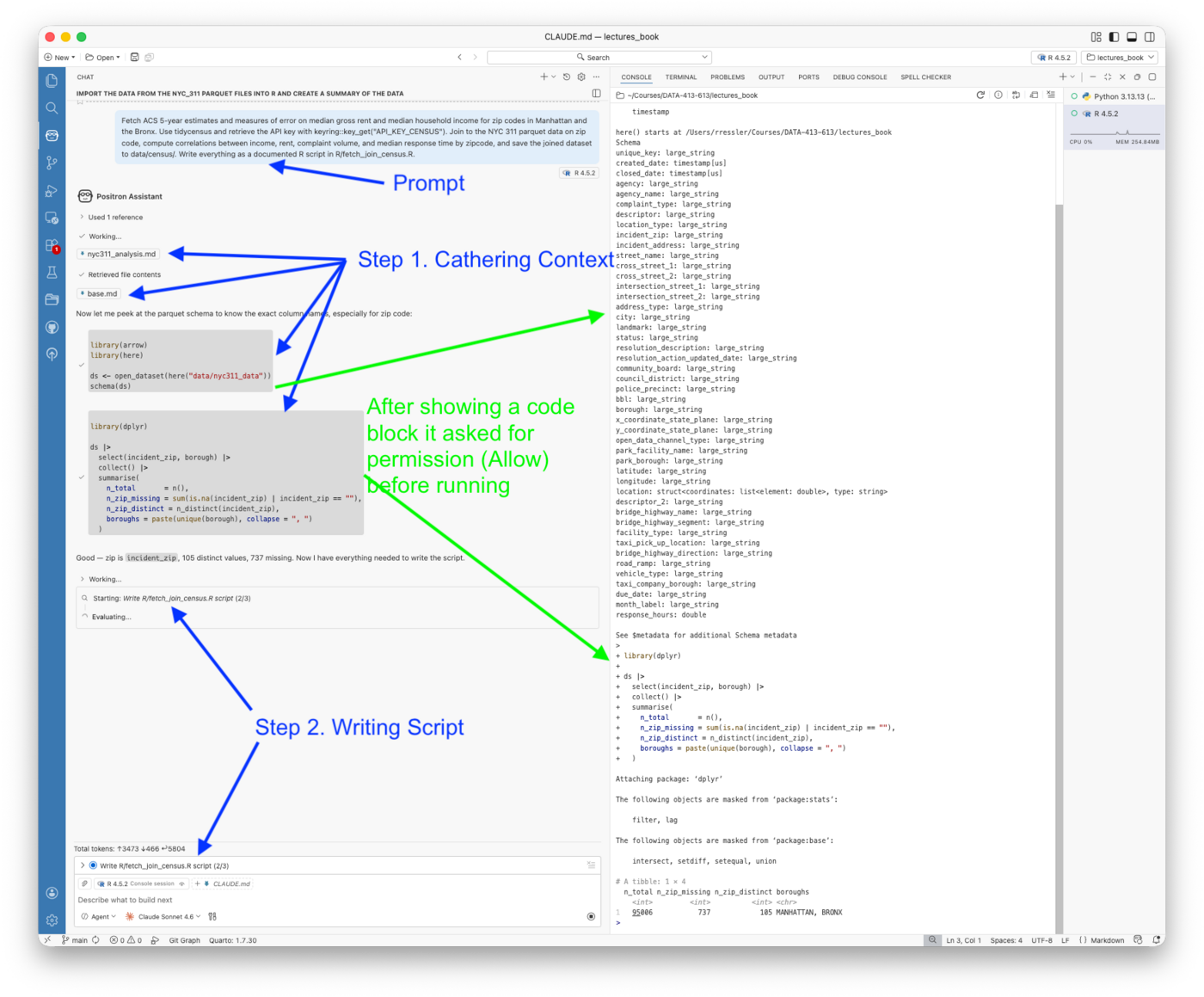

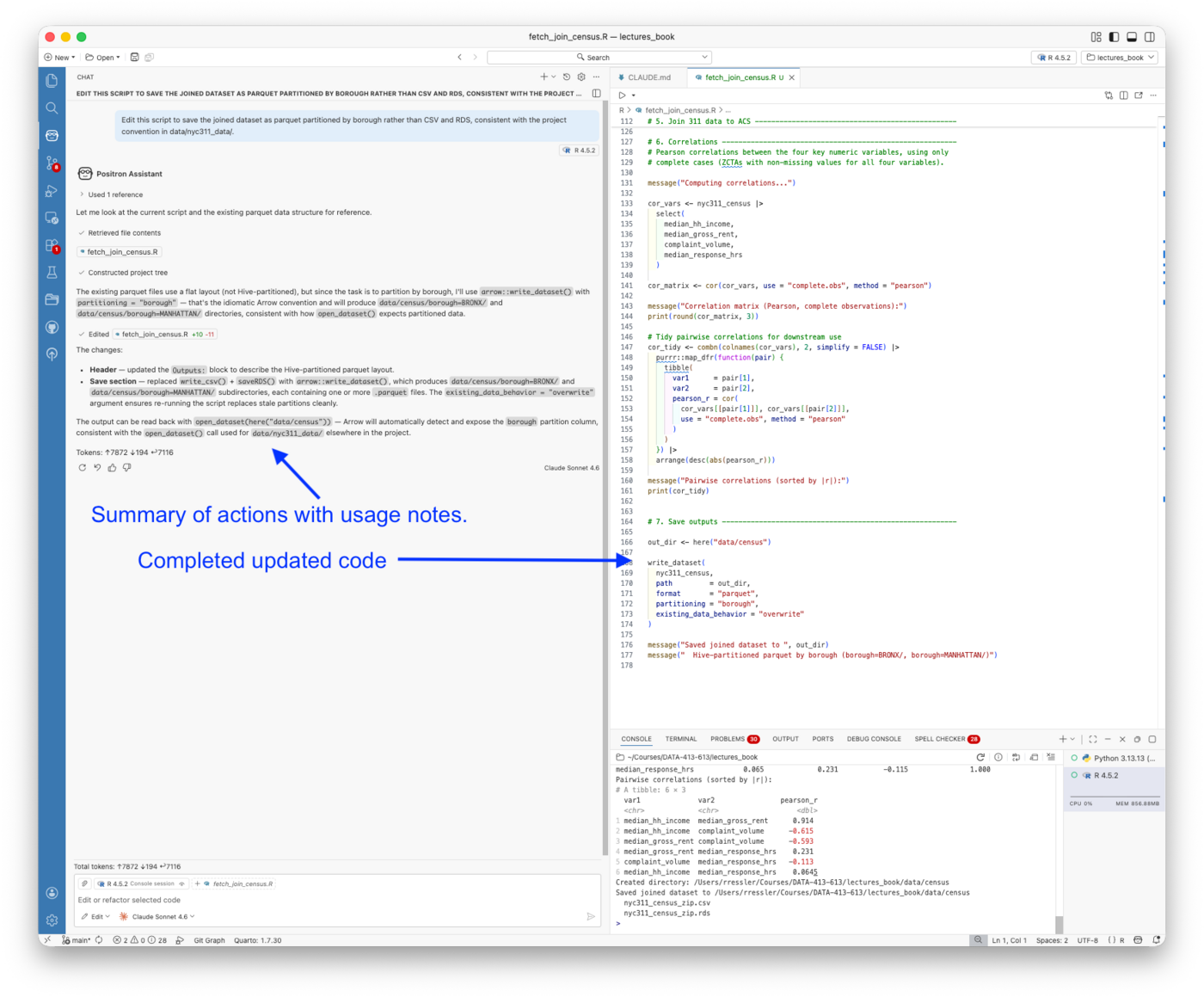

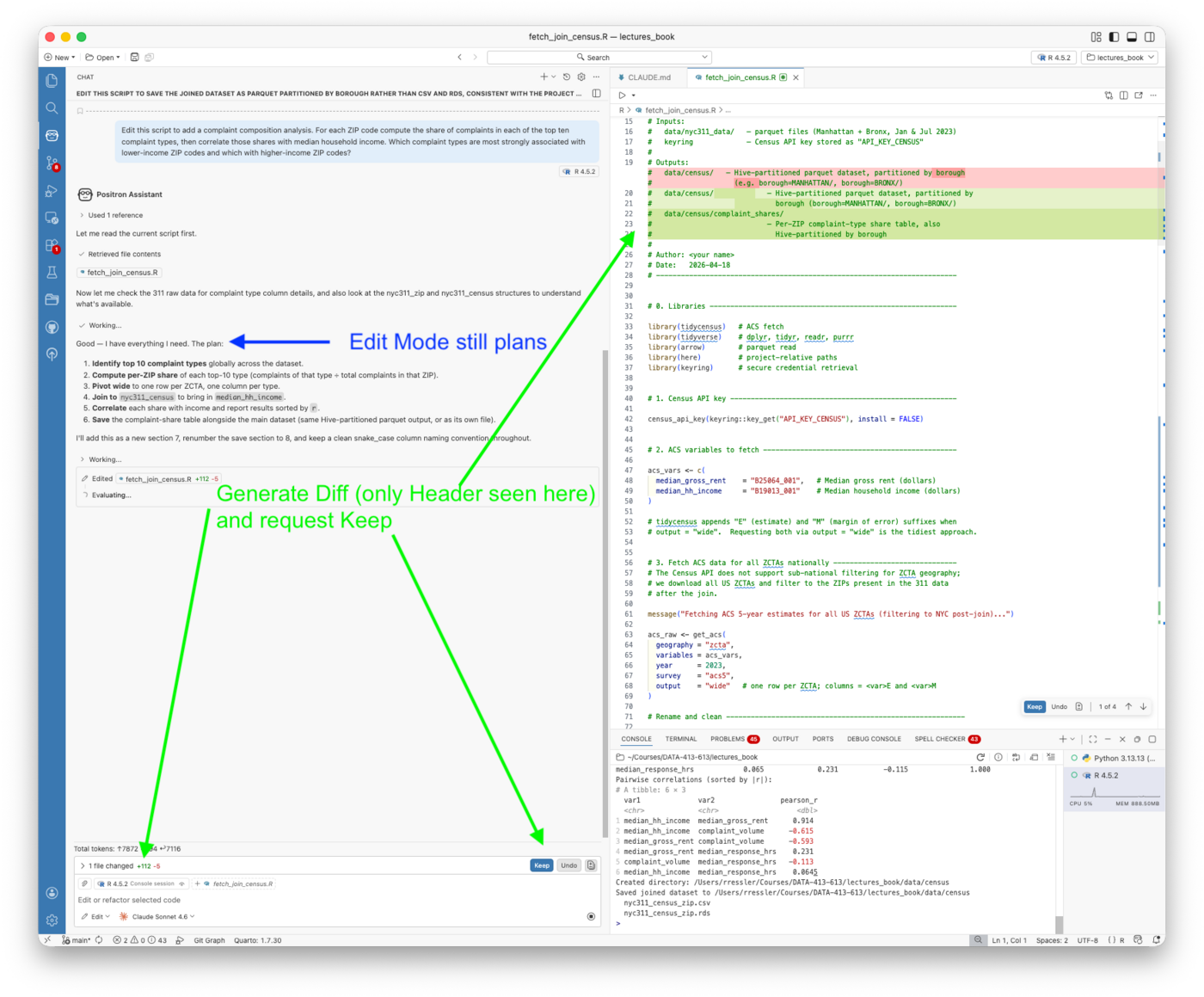

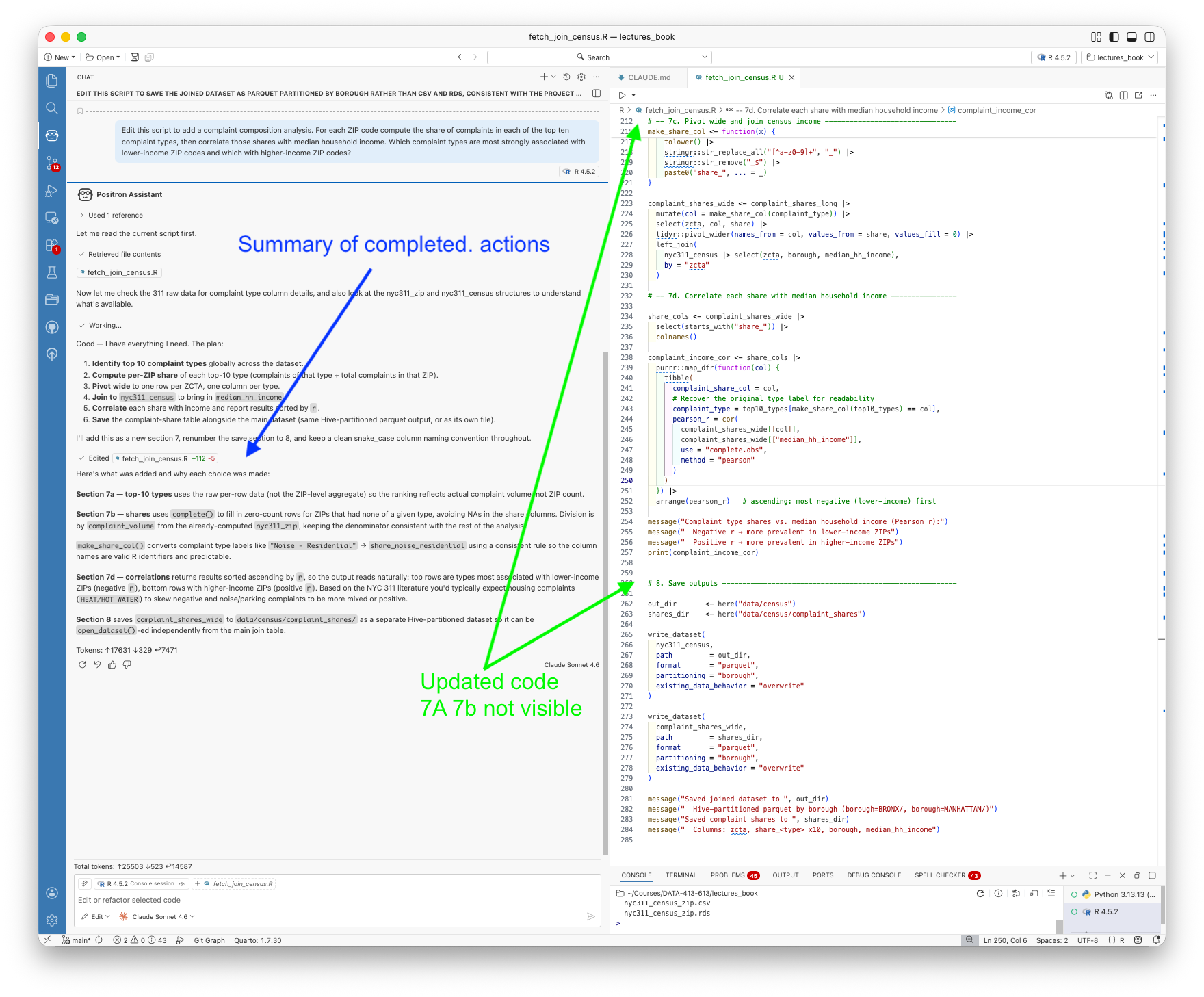

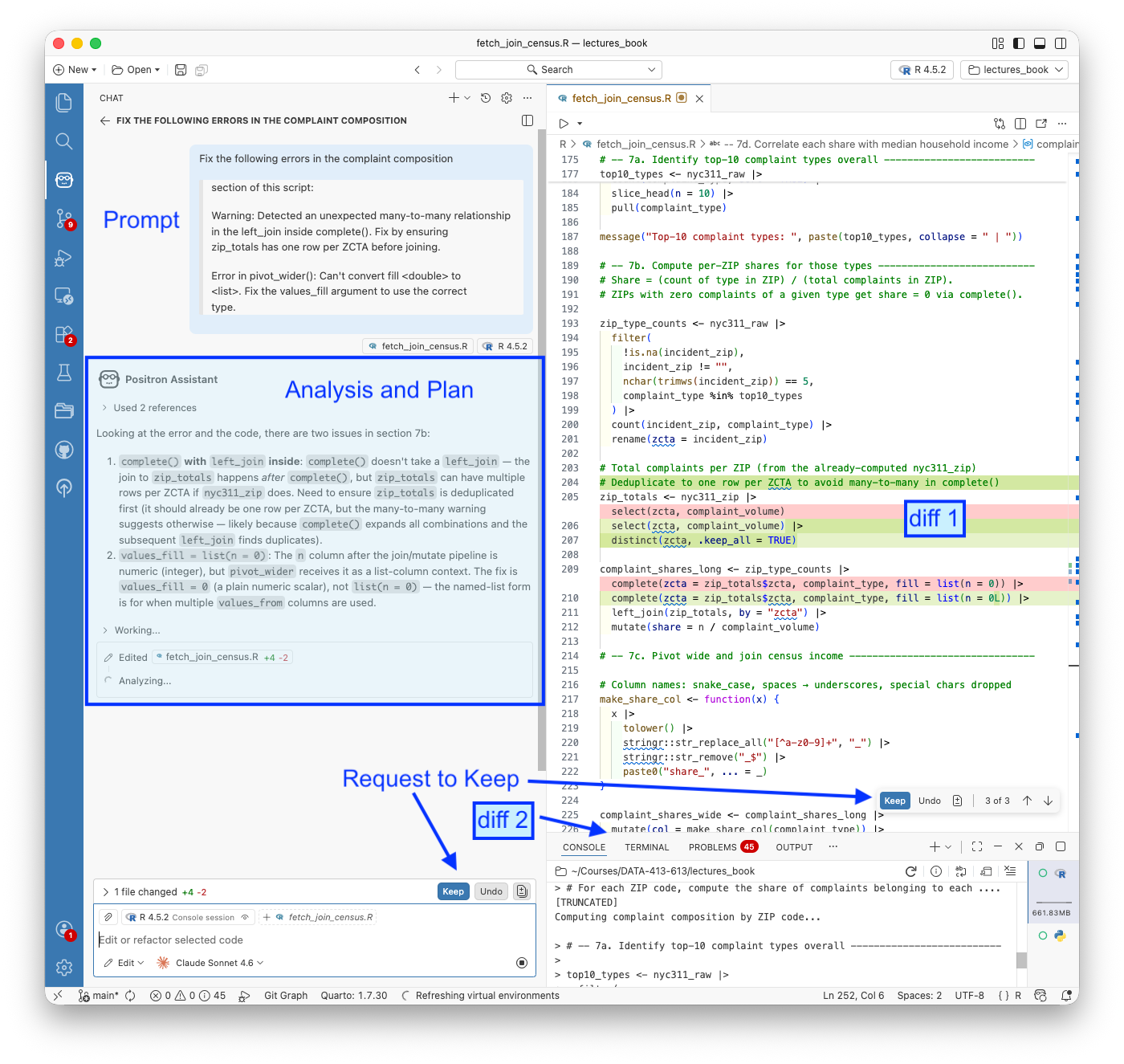

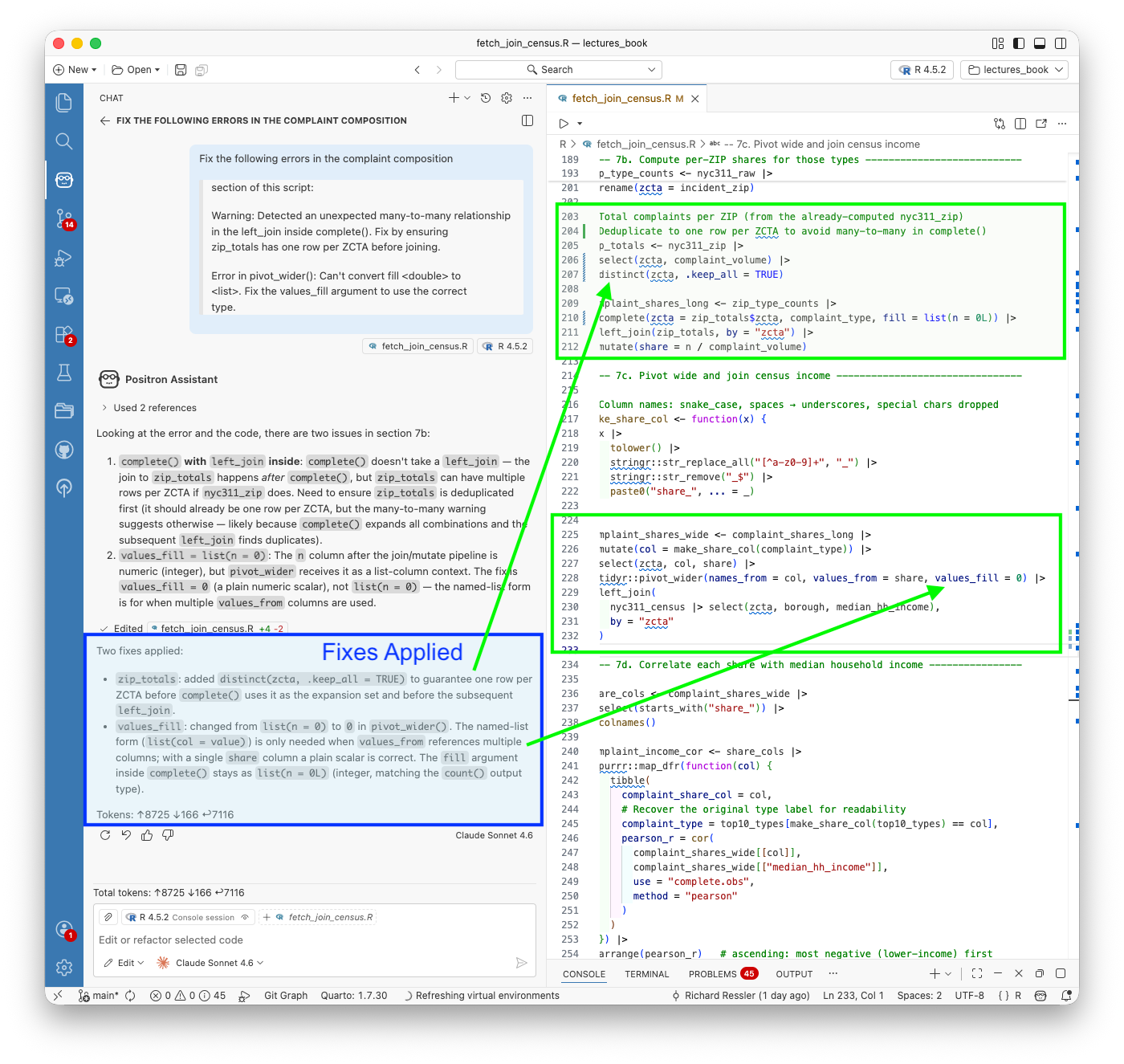

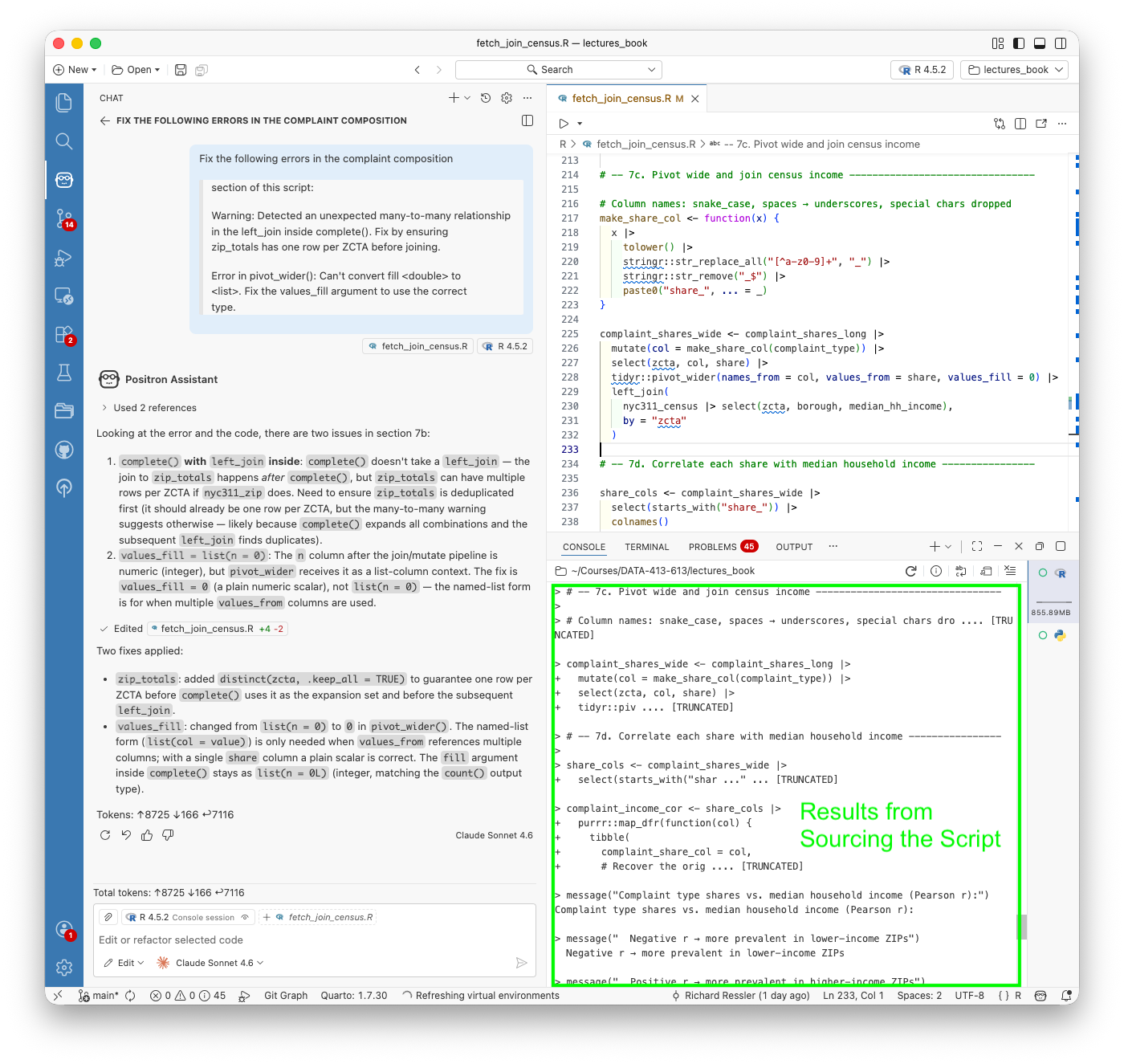

- Configure Positron Assistant with a Console API key, connect GitHub Copilot for inline completions, and use Ask, Edit, and Agent modes for different kinds of analytical tasks

- Explain what Positron Assistant knows about your session that a terminal-based agent does not, and use that session awareness to ask more precise analytical questions

- Apply responsible use principles specific to agentic systems: minimum necessary scope, human checkpoints before irreversible actions, critical evaluation of agent output, and transparency about AI assistance in submitted work

19.1.3 References

- OpenAI’s Prompt Engineering (OpenAI 2025)

- Prompt Engineering Guidebook (DAIR-AI 2025)

- Prompt Engineering Overview (Anthropic Engineering n.d.a)

- Effective context engineering for AI agents (Anthropic Engineering 2025)

- OLlama (“Ollama’s Documentation” 2026)

- Building Effective AI Agents (Anthropic 2024)

- A practical guide to building agents [aopenaiPracticalGuideBuilding2026]

- Socrata Open Data Network and API (“Socrata Developers” n.d.)

- Groq API Reference (groq n.d.)

- OpenAI Agents SDK Documentation (OpenAI Developers n.d.)

- Claude Code Documentation (Anthropic n.d.b)

- VoltAgent Awesome Claude Code Subagents (“VoltAgent/Awesome-Claude-Code-Subagents” 2026)

- Positron (Posit 2025)

- Positron Assistant (Positron n.d.)

R and Python Packages

- RSocrata - an R package for Socrata Open Data Portals (City of Chicago n.d.)

- {tidycensus} R Package (Walker and Herman 2023)

- {keyring} R Package (Csardi 2022)

- pyprojroot: Project-oriented workflow in Python (Chen 2026)

- {pdfplumber} python package for working with PDFs (Jeremey Singer-Vine n.d.)

- Definitive Guide to pdfplumber Text and Table Extraction Capabilities (Bispo 2024)

Other References for additional exploration.

- Both OpenAI and Anthropic have many online resources for supporting the use of their agentic systems as do other LLM providers.

- Best Practices for CLaude (Anthropic n.d.a)

- Prompting Best Practices (Anthropic Engineering n.d.b)

- Introduction to CLaude Skills (Alex Notov 2025)

- How to Build a Production-Ready Claude Code Skill (Takeda 2026)

- Claude Code: Create custom subagents (“Claude Code Create Custom Subagents” n.d.)

- Claude Code: Define your agent (“Claude Code Define Your Agent” n.d.)

- Google Agent Development Kit (ADK) (Google n.d.)

- {uv} Python Package Manager (Astral 2025)

- OWASP Least Privilege Principle (OWASP Foundation n.d.)

Academic literature on LLM agents and prompt engineering

- A Systematic Survey of Prompt Engineering in Large Language Models: Techniques and Applications (Sahoo et al. 2024) covers techniques from zero-shot and few-shot prompting through chain-of-thought.

- A Survey of Context Engineering for Large Language Models (Mei et al. 2025) introduces context engineering as a formal discipline distinct from prompt engineering, covering retrieval, memory, tool integration, and multi-agent systems across 1,400 papers.

- Agentic Large Language Models, a survey (Plaat et al. 2025) covers LLMs that reason, act, and interact, organized around exactly those three capabilities, with discussion of tool use, multi-agent systems, and responsible use risks.

- Large Language Model Agent: A Survey on Methodology, Applications and Challenges (Luo et al. 2025) covers agent architectures, collaboration mechanisms, and evaluation methodologies with a unified taxonomy.

- Awesome Agent Papers (Luo 2026) The companion repository to the survey above, maintained as a curated and continuously updated reading list organized by the same taxonomy. A good starting point for going deeper on any specific topic from this chapter.

This chapter was developed with the assistance of both ChatGPT and Claude.

- These tools were used throughout to explore ideas, generate initial code, and draft prose.

- Through extended iterative conversations the material was revised, restructured, removed, and adjusted as I tuned it to align with my pedagogical goals for this course and serve as a reference for others.

- All material was verified against primary references. The framing, sequencing, and editorial judgments are my own.

The contents of this chapter, their clarity, accuracy, and relevance, are my responsibility. Any errors are mine alone.

19.2 A Guide to Prompt Engineering

This section was written based on a conversation with an AI Assistant that started with a 156 word prompt and about 25 follow-up prompts to adjust, expand and refine content. Additional human editing improved clarity and consistency as well as formatting and adjustments to code and code chunk options. References were checked and adjusted for accuracy and citations were added. Links to inline references were added. Any errors are my responsibility.

19.2.1 Prompt Engineering

“Prompt engineering is the process of writing effective instructions for a model, such that it consistently generates content that meets your requirements.” OpenAI (2025)

A “prompt” can be a question, a request for code, a set of instructions, or even an ongoing conversation with a Large Language Model (LLM).

- Creating or “engineering” a prompt (or series of prompts) to produce the most accurate, useful, and relevant output possible to meet your goals is still a mix of art and science.

Prompt engineering builds on an understanding of how LLMs work (not how they are trained) to create prompts that are effective for your purposes. Characteristics of LLMs can shape effective prompts.

- Non-determinism: LLM responses vary because they are generated from probabilities in a high-dimensional space. Small wording changes can produce very different outputs.

- Asking what is the “most positive” versus the “least negative” sentiment of text.

- Context Sensitivity: LLMs don’t “understand” in the human sense; they generate responses based on patterns in data. How you ask a question strongly influences the quality of the answer.

- Specifying “Give me R code” versus “Explain in plain English” leads to very different outputs.

You can apply guidelines and best practices to improve your chances of getting useful results consistently while building your understanding and skills.

- Efficiency: A well-crafted prompt reduces the need for repeated clarifications.

- Accuracy: Clear context, guidance, and constraints can help minimize errors or hallucinations.

- Improved Collaboration: prompt engineering can be seen as refining or debugging your question prior to collaborating with others or the LLM.

- Multi-language skill: The same techniques apply whether you’re generating R code, Python code, documentation, or explanations.

In short: Prompt engineering is about learning how to “talk to the model” effectively so it can become a productive tool for your goals rather than a source of confusion.

19.2.2 How LLMs Respond to Prompts

LLMs (like ChatGPT, Claude, or Gemini) do not “understand” like humans. Instead, they:

- Predict the next token (word or subword) given your input and context.

- Use patterns from training data to approximate reasoning.

- Are sensitive to framing: wording, order, specificity, and constraints change the output.

- Can hallucinate: generate confident-sounding but false statements.

19.2.2.1 Tokenization

LLMs do not read raw text directly. Instead, they break text into tokens (smaller units such as words, subwords, or characters).

- Tokens are not always whole words.

- Example:

"data science"→["data", " science"](2 tokens)

- Example:

"statistics101"→["statistics", "101"](2 tokens)

- Example:

"internationalization"→["international", "ization"](2 tokens)

- Example:

- This is similar to but not the same as Lemmatization which reduces words to their base form (common in NLP preprocessing, not in LLM tokenization).

"running"→"run"

"better"→"good"

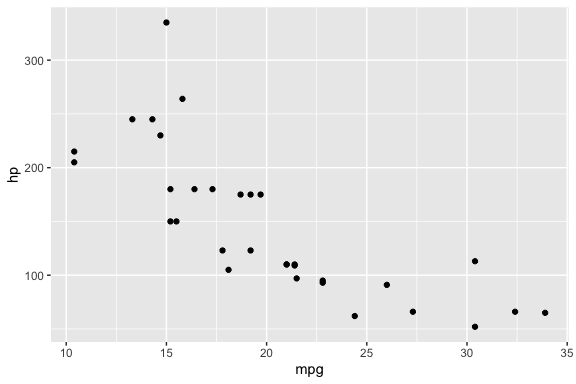

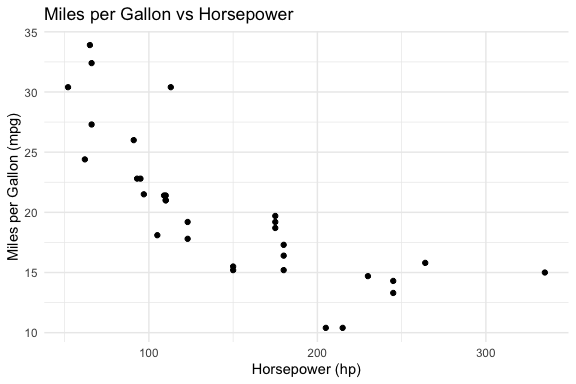

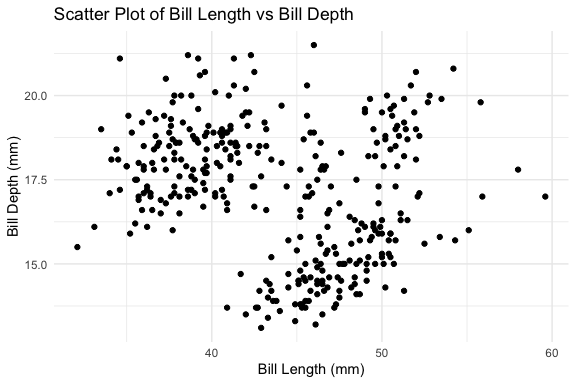

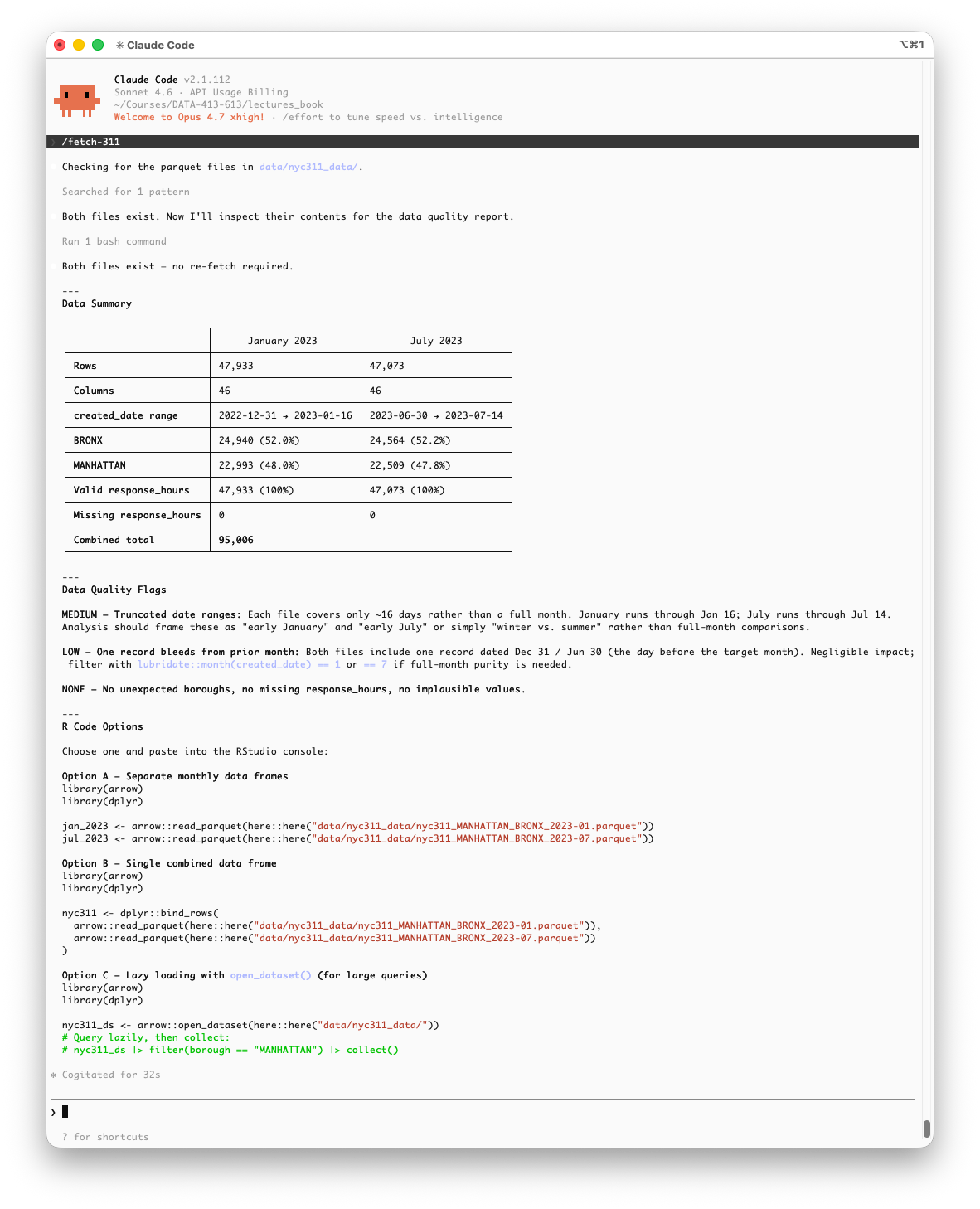

Here is a toy example of token splitting.

19.2.2.2 The Context Window and Token Limits and Conversation Context

The context window is the full set of tokens the model sees at any single moment — think of it as the model’s working memory for a task.

- It holds everything at once: your system instructions, the conversation history, your current message, and any retrieved documents or data.

- The model can only reason about what is currently in this window.

- Nothing outside it exists from the model’s perspective.

- Managing what goes into this window (what to include, what to summarize, what to leave out) becomes one of the most important skills as you move from interactive chat toward writing code that calls models programmatically.

Each model has a maximum token limit (prompt + response combined).

- Context windows have grown dramatically and continue to expand rapidly so always check the current documentation for the model you are using.

- Representative sizes as of early 2026:

- Small/local models (e.g., ollama 7B–13B): 8k–32k tokens

- Mid-range models (e.g., GPT-4o, Llama 3.1): 128k tokens

- Large frontier models (e.g., Claude Sonnet 4.6, Gemini 3 Pro, GPT-5.4): 200k–1M+ tokens

How “memory” actually works in interactive chat:

- The model itself is stateless, i.e.,it has no built-in memory between calls.

- Each API call is processed independently, with no knowledge of prior exchanges unless that history is explicitly included.

- The appearance of a continuous conversation is an illusion created by the application layer (Claude.ai, ChatGPT, etc.), which automatically prepends all prior messages to each new prompt before sending it to the model.

- As a conversation grows, so does the context it consumes.

- Long conversations with code in prompts and responses can fill the context window quickly and slow performance.

- If the limit is approached, older context may be truncated, leading to loss of information or inconsistent responses and eventually you will need to start a new conversation.

Bigger is not always better — context rot:

- Research consistently shows that model performance degrades as context length increases, even when the tokens technically fit in the window. This is sometimes called context rot.

- Models tend to attend more reliably to information near the beginning or end of the context, and may lose track of details buried in the middle.

- More tokens can mean more distraction, not more capability.

- A practical rule of thumb: effective reliable performance is typically lower than the advertised maximum.

Persistent memory is a different mechanism entirely:

- Some platforms (e.g., Claude Projects, ChatGPT’s memory feature, Gemini’s notebook integrations) now offer persistent memory which is the ability to retain information across separate conversations.

- This is not a property of the model itself. It is an application-layer feature: the platform stores summaries or preferences externally and injects them back into the context window at the start of each new session.

- The underlying model is still stateless; what changes is what the platform loads into context before you say a word.

- Systems such as Retrieval-Augmented Generation (RAG) and augmented LLMs Section 19.4.1, exhibit this pattern of storing information externally and retrieving it into context on demand.

- These are examples of persistent memory scaliung from a chat interface feature into a key element of agentic systems.

- Keep prompts focused. Paste only the relevant portion of a dataset or file, not the whole thing. Summarize or sample when the input is large.

- Start a new conversation for a new topic. This prevents unrelated context from accumulating and keeps the window clean.

- For local models with smaller windows (as you will use with ollama), this constraint is tighter so short, targeted prompts matter more.

- Place the most important information at the beginning of your prompt, not buried in the middle, where attention is weakest.

19.2.2.3 Tokens are Converted to Numbers

- LLMs do not operate on tokens as text. Each token is mapped to a numerical vector (an embedding).

- Embeddings are high-dimensional vector representations of tokens or text that capture semantic meaning.

- LLM embeddings are vectors with hundreds or thousands of dimensions, not just 2 or 3, and each dimension encodes some aspect of meaning, context, or syntactic/semantic feature.

- Different models can use different embedding schemes.

- The model performs mathematical operations on these vectors to predict the next token based on mathematical similarity or “closeness.”

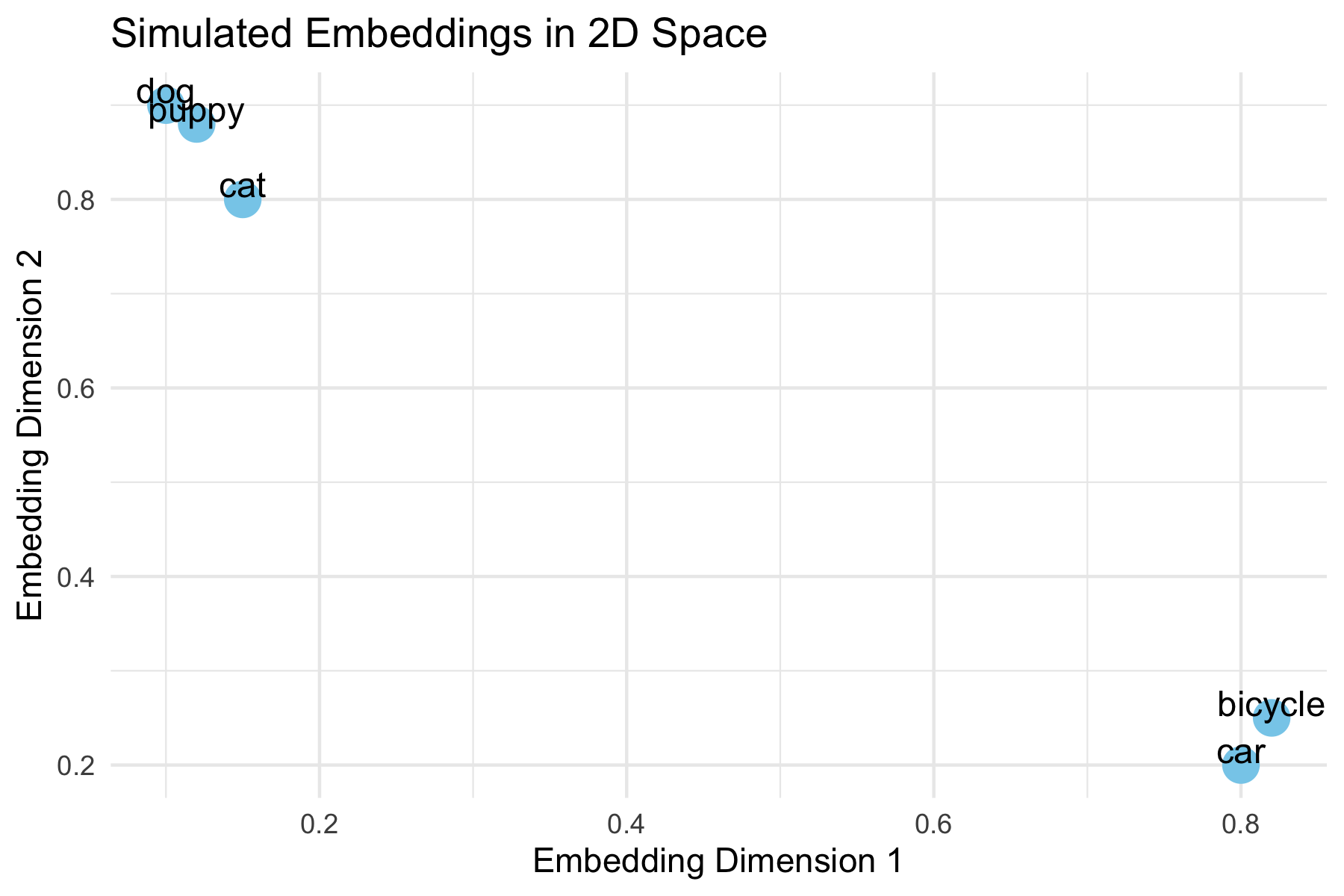

Example:

- "dog" → [0.12, -0.03, 0.88, ...]

- "puppy" → [0.14, -0.01, 0.91, ...]

- "car" → [0.80, 0.20, -0.05, ...]

19.2.3 Measuring Closeness

Similarity between the embedding vectors is measured using metrics like cosine similarity.

\[ \text{cosine similarity} = \frac{\vec{A} \cdot \vec{B}}{\|\vec{A}\| \|\vec{B}\|} \]

- Cosine similarity = 1 -> vectors point in the same direction (highly similar meaning).

- Cosine similarity = 0 ->vectors are orthogonal (unrelated meaning).

Embeddings allow LLMs to “understand” similar words or phrases as they have embeddings “close” together in vector space.

- These metrics are used for:

- Semantic search (finding relevant text)

- Retrieval-Augmented Generation (RAG)

- Clustering or similarity calculations

- Semantic search (finding relevant text)

19.2.4 Visualizing Embeddings in R

This example shows how words with similar meaning might cluster together in the embedding space.

- “dog”, “puppy”, and “cat” cluster closely, reflecting semantic similarity.

- “car” and “bicycle” are farther away, showing unrelated meaning.

- In real embeddings, vectors are high-dimensional, but this 2D example illustrates the concept.

- Cosine similarity measures closeness mathematically in high dimensions, even though they can’t be plotted.

Important nuance

- These similarity measures are inherently fuzzy.

- Minor variations in your prompt—such as negating a sentence, reordering words, or changing context—can result in large differences in the output, even if the overall meaning seems similar to a human reader.

Example:

- Prompt 1: "List three common R functions for plotting a histogram."

- Prompt 2: "List three uncommon R functions for plotting a histogram."

- Prompt 3: "List three popular R functions for plotting a histogram."

Even though only one word changes, the model may:

- Suggest completely different functions.

- Reorder examples differently.

- Include/exclude certain packages.

This happens because embeddings map text to points in a continuous space, and the model predicts outputs based on small differences in those positions.

Takeaway: Always experiment with multiple phrasings and verify results. Treat embeddings and similarity measures as guides, not exact truth.

19.2.5 Guidelines for Effective Prompts

Good prompts share a few common traits: they are clear, contextual, and iterative. Here are strategies to improve your interactions with AI tools:

- Be Specific

Clearly describe what you want the AI to do. Include the programming language, the type of output, and the level of detail you expect.

Example:- Weak: “Plot the data.”

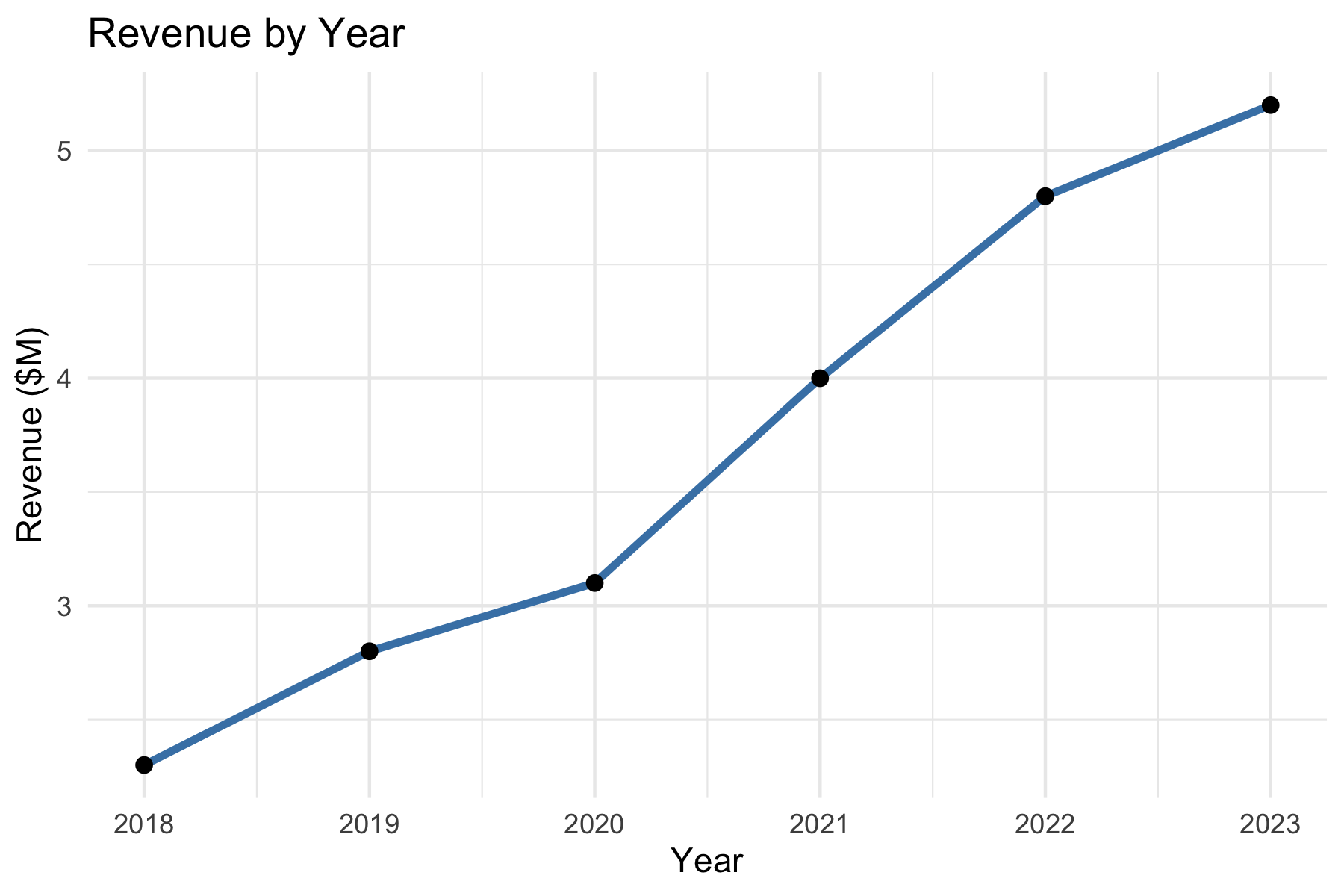

- Strong: “Write R code using ggplot2 to create a line plot of

revenuebyyearwith labeled axes and a descriptive title.”

- Give Context

Provide background information such as the data structure, libraries, or your end goal. This reduces ambiguity and helps the AI tailor its response.

Example:- “I have a pandas data frame with columns

cityandpopulation. Please write Python code using seaborn to create a bar chart of population by city.”

- “I have a pandas data frame with columns

- State Constraints

Specify limitations or requirements for the response, such as the format, length, or assumptions.

Example:- “Give me only the R code, no explanations.”

- “Limit the answer to a single ggplot2 figure.”

- “Assume the data frame has no missing values.”

- Iterate

Think of prompting as a conversation, not a one-shot request. Start simple, review the output, and refine with follow-up prompts.

Example:- First prompt: “Write Python code to read a CSV file and display the first five rows.”

- Follow-up: “Now extend this code to calculate the mean of all numeric columns.”

- Next follow-up: “Format the summary as a neat table.”

- Later, we will see how iteration moves from conversation to code, where you can reuse a prompt programmatically rather than by typing.

- Verify

Never assume the AI is correct. Check the output against your own knowledge, official documentation, or by running the code. Be alert for hallucinations (nonexistent functions, incorrect syntax, or misleading explanations).

Example:- If the AI suggests

robust_cor()in R, search the documentation. If it doesn’t exist, redirect:

“That function doesn’t exist. Could you instead use Spearman’s correlation or show me how to fit a robust regression withMASS::rlm()?”

- If the AI suggests

- Adjust for Creativity or Accuracy

You can control how wide-ranging or precise the response should be by adjusting your wording.

Example:- Creative: “Show three different ways in R to visualize a distribution.”

- Accurate: “Show the single most standard ggplot2 approach for plotting a histogram of a numeric variable.”

- Assign a Role

Guide the style of the response by telling the AI who it should act as.

Example:- “You are a data science tutor. Explain correlation to a beginner and include an R code example.”

- “You are a coding assistant. Provide concise Python code with no explanations.”

19.2.5.1 Example: Making a Good First Prompt

Weak prompt:

> Plot the data.

Improved prompt:

> I have a data frame in R with columns year and revenue. Please write R code using ggplot2 to create a line plot of revenue by year, with labeled axes and a title.

19.2.5.2 Example: Building a Conversation

First Prompt:

> Write Python code to read a CSV and summarize the first five rows.

LLM Output:

Code using pandas.read_csv() and df.head().

Follow-Up Prompt:

> Please extend your code to also compute the mean of all numeric columns and print the result.

LLM Output:

Adds df.mean(numeric_only=True).

Next Follow-Up:

> Could you format the summary as a table with column means below the head output?

The model refines until the solution fits your needs.

19.2.5.3 Example: Checking for Hallucinations

Prompt:

> Write R code to compute a robust correlation coefficient.

LLM Output:

Provides code with a function robust_cor() (a hallucination as the function does not exist).

Student Check: - Get’s an error message and looks up if robust_cor() exists in R.

- If not, ask:

> That function doesn’t seem to exist. Could you instead show how to use MASS::rlm() or another real package to compute robust correlation?

The key is to verify and redirect.

19.2.5.4 Example: Large vs. Small Model Responses

The same prompt can yield different results depending on the size of the model.

Larger models (billions of parameters) generally produce more detailed and accurate answers, while smaller ones may be faster but less reliable.

Prompt:

> In R, how do I compute the correlation between two variables when the data has outliers?

| Model | Response |

|---|---|

| Large model (e.g., GPT-4, Claude Opus, Llama-70B) | “One option is to use a robust correlation method. For example, you can use Spearman’s rank correlation in R: cor(x, y, method = "spearman"). Another approach is to fit a robust regression using MASS::rlm() if you want to downweight outliers. Both approaches reduce the influence of extreme values compared to Pearson correlation.” |

| Smaller model (e.g., GPT-3.5, Llama-7B) | “You can use cor(x, y) in R. This computes correlation between two vectors.” (Note: does not mention outliers or alternatives like Spearman or robust regression.) |

Takeaway:

- The large model recognizes the nuance (outliers) and suggests multiple valid approaches.

- The smaller model gives a quick but incomplete response.

- Lesson: Always check whether the model has considered your context.

19.2.5.5 Example: Specifying Roles

When prompting, you can assign a role to the AI which will adjust its response.

Prompt 1 (role: assistant):

> You are a coding assistant. Write R code to plot the distribution of a numeric variable.

Likely Response:

Straightforward R code using hist() or ggplot2::geom_histogram().

Prompt 2 (role: instructor):

> You are a statistics instructor. Explain to a beginner how to plot the distribution of a numeric variable in R, and include an example using ggplot2.

Likely Response:

A step-by-step explanation with annotated code — more teaching-oriented.

19.2.5.6 Example: Adjusting Creativity vs. Accuracy

LLMs can be “dialed” for creativity (diverse answers, new ideas) or accuracy (precise, more deterministic answers).

- This is often controlled by a setting called temperature (higher = more creative, lower = more predictable).

- Even without changing system settings, you can influence style through your prompt wording.

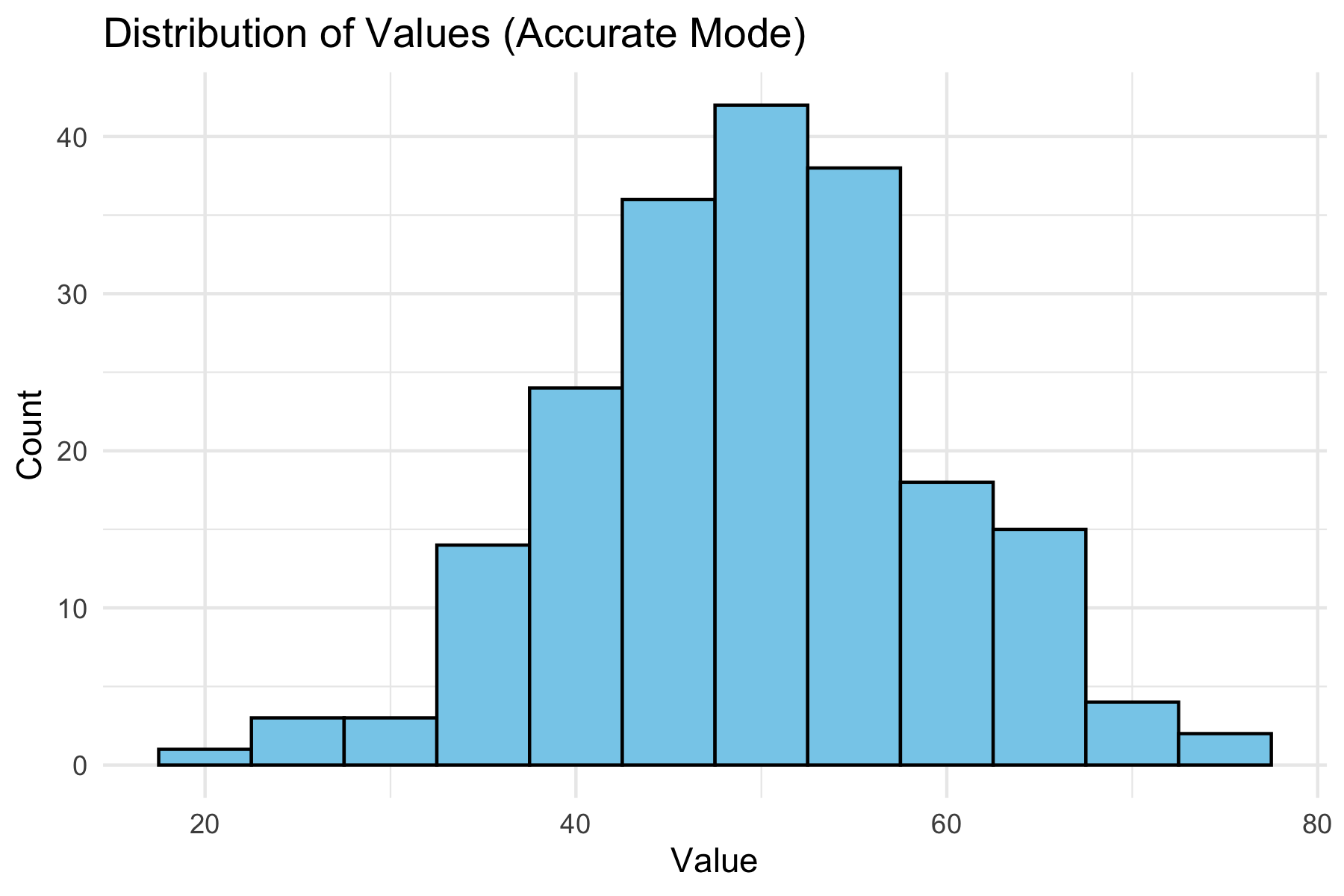

Prompt (creative mode):

> Be imaginative. Show me three different R approaches to visualize the distribution of a variable.

Possible Output:

- Histogram (geom_histogram())

- Density plot (geom_density())

- Boxplot (geom_boxplot())

Prompt (accuracy mode):

> Provide the single most standard way in R using ggplot2 to visualize a variable’s distribution.

Possible Output:

One clean example using geom_histogram(), without alternatives.

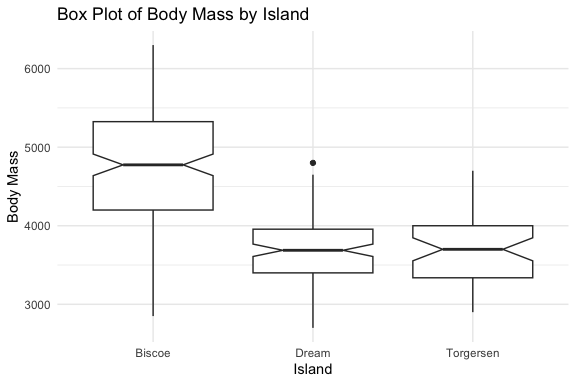

Below we show a “standard” accurate plot of a distribution: If the AI had been asked in creative mode, it might instead show a density plot, violin plot, or boxplot.

library(ggplot2)

set.seed(42)

values <- rnorm(200, mean = 50, sd = 10)

ggplot(data.frame(values), aes(x = values)) +

geom_histogram(binwidth = 5, fill = "skyblue", color = "black") +

labs(title = "Distribution of Values (Accurate Mode)",

x = "Value", y = "Count") +

theme_minimal()

You can shape the AI’s “persona”(assistant, instructor, critic, tutor) and also control breadth vs. precision of answers depending on their goals.

19.2.6 Summary

Here is a quick reference sheet you can use when working with AI tools:

| Strategy | What It Means | Example Prompt |

|---|---|---|

| Specify Role | Tell the AI who it should act as (assistant, instructor, critic, tutor). | “You are a statistics instructor. Explain correlation to a beginner with examples in R.” |

| Give Context | Provide background: dataset, libraries, goals. | “I have a data frame in R with columns year and revenue. Use ggplot2 to plot revenue by year.” |

| State Constraints | Limit length, format, or assumptions. | “Give me Python code only, no explanations, using pandas and seaborn.” |

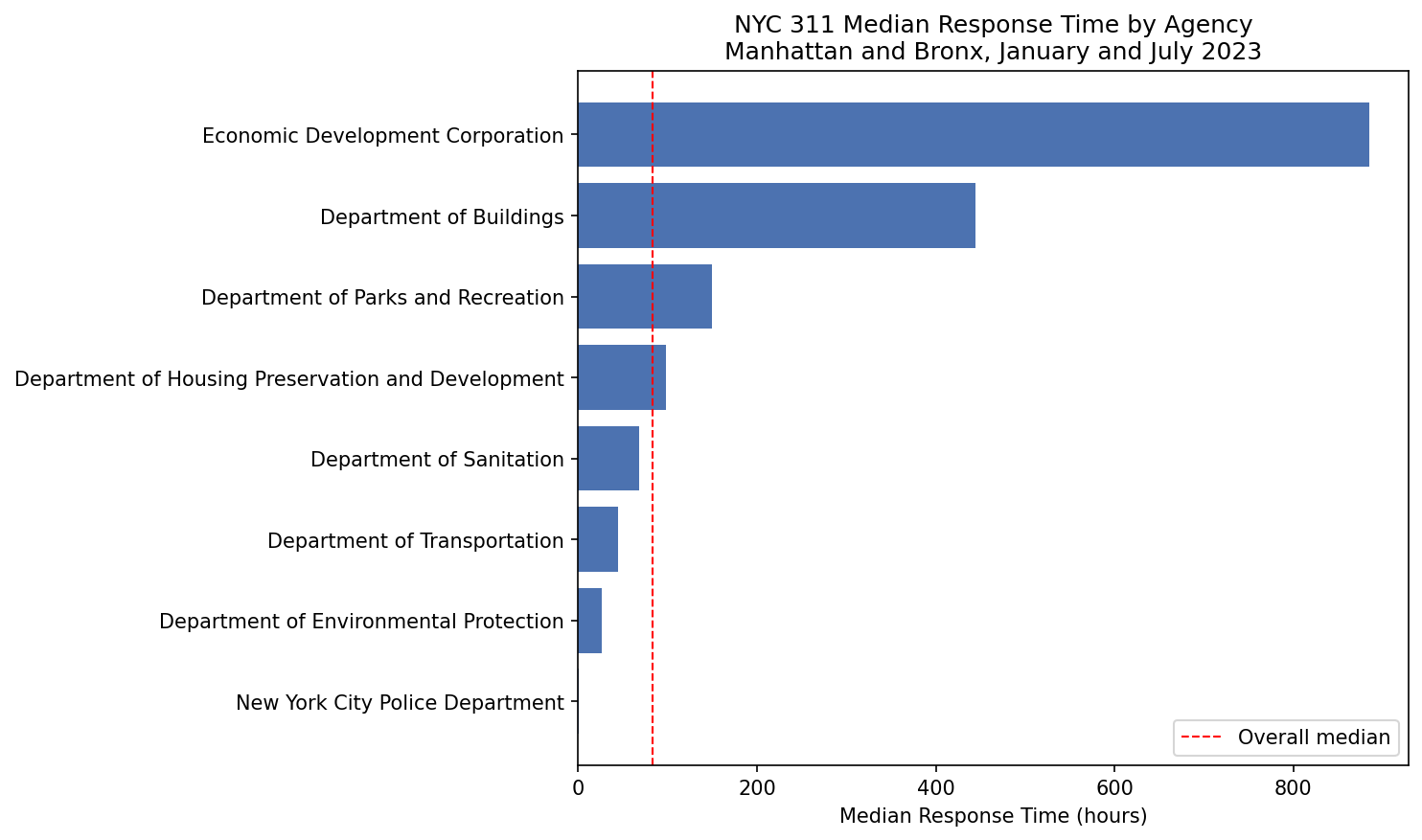

| Iterate | Use follow-up prompts to refine or extend. | “Now add labels to the axes.” |

| Verify | Check outputs against your knowledge or documentation. | “That function doesn’t exist in R. Show me an alternative from MASS or robustbase.” |

| Creativity vs. Accuracy | Ask for one best method (accuracy) or multiple diverse methods (creativity). | “Show three different ways in R to visualize a distribution.” |

| Check for Hallucination | Be skeptical if the AI invents code/functions. Redirect if necessary. | “I can’t find that function. Can you cite the package or suggest a real function?” |

Prompt engineering is not about tricking the AI, but about effective communication.

Think of the AI as a partner: - You provide structure, clarity, and verification. - It provides suggestions, alternatives, and explanations.

With practice, you’ll learn when to ask for creativity, when to demand precision, and how to iterate toward a reliable solution.

In the next section, we begin moving from interactive conversations to code driven interactions, turning prompts from typed messages into functions that can be called, tested, and reused.

19.3 From Interactive Prompts to Code

This short module is about transitioning from chat-based interactions to working with LLMs in a structured, code-driven manner as is required for agentic systems.

It focuses on strengthening thinking about prompts in three areas that will be useful for using agents:

- Structured prompt design

- Iteration as a loop

- Constraint prioritization

19.3.1 A Simple Prompt Template

The following template provides a consistent way to construct high-quality prompts.

A good prompt answers five questions:

- ROLE: Who should the AI act as?

- TASK: What exactly should it do?

- CONTEXT: What information does it need?

- CONSTRAINTS: What rules must it follow?

- OUTPUT FORMAT: What should the result look like?

Example:

- Role: You are a data science tutor.

- Task: Write R code to visualize revenue over time.

- Context: I have a data frame with columns

yearandrevenue. - Constraints:

- Use ggplot2

- Assume no missing values

- Include axis labels and a title

- Output: Code only with no explanation

The ROLE component deserves particular attention because it shapes the entire character of the response — and because in more complex systems, the same model may be called multiple times playing different roles within a single workflow.

- Role prompting reliably affects output style and format across models. Its effect on accuracy is less consistent and depends on the model and task.

- The most reliable use of roles in data science workflows is not to improve correctness, but to separate concerns by using different roles to shape what the model attends to and how it presents results.

Table 19.1 lists some common roles you can use to shape the LLM response style and structure in lieu of lengthy prose descriptions.

| Role | Primary Effect | Example use |

|---|---|---|

| Coding assistant | Terse, code-first, no explanation | Generating a function |

| Data science tutor | Explanatory, step-by-step, teaching tone | Learning a new method |

| Code reviewer | Critical framing, error-focused | Evaluating output quality |

| Planner | Breaks a task into ordered subtasks | Decomposing a complex goal |

| Evaluator / Judge | Assesses output against criteria | Checking constraint satisfaction |

| Summarizer | Condenses prior context | Compressing conversation history |

These role serve distinct purposes:

- The first three roles primarily change style and are broadly consistent across models.

- The last three, Planner, Evaluator, and Summarizer, are structural roles used to divide labor across separate model calls.

- These are where role assignment matters most in agentic systems:

- Asking the same model call to both generate and evaluate its own output in one prompt is less reliable than separating those roles into two calls.

A tightly structured prompt has the following benefits:

- Reduced ambiguity

- Improved consistency which supports reproducibility

- Easier to debug

- Provides a foundation for agentic systems

This structure mirrors how agentic systems internally organize tasks, making it easier to transition from prompting to automated workflows.

19.3.2 Iteration as a Loop

In chat-based interactions, iterating prompts can be thought of as a conversation.

However, when working with code-driven interactions, it is more useful to think of iteration as a structured loop.

The Iteration as a Structured Loop Pattern follows a common cycle:

- Ask

- Evaluate

- Refine

- Repeat

Example

- Ask: Write R code to plot revenue by year.

- Evaluate: Check for missing labels or no title

- Refine: Add axis labels and a descriptive title.

- Repeat: Continue refining until the output meets requirements

This loop is the foundation of more advanced systems:

- Interactive Prompting leads to a human-driven manual loop

- Workflows can automate a fixed version of this loop

- Agents can run it dynamically, deciding when to continue, stop, or change direction.

19.3.3 Constraint Hierarchy

Not all constraints are equally important. Focus on the critical few instead of the “messy many.”

Suggested: Prioritize Constraints in the following order and check constraints in this order as errors are often caused by missing or unclear high-priority constraints.

- Output Goal

- code vs explanation

- table vs paragraph

- Tools / Libraries

- ggplot2, pandas, etc.

- Assumptions

- missing values

- data types

- Scope

- one solution vs multiple options

- Style

- concise vs detailed

Example of weak versus strong:

- Weak constraint: Make it clear and nice.

- Strong constraint: Output only R code using ggplot2 with labeled axes and a title.

Example: Poor vs Structured Iteration

- Poor Iteration: User repeatedly adds vague follow-ups such as “Make it better”, “Fix it” or “Change it a bit”

- Results can be inconsistent outputs, drifting logic, confusion in tracking the logical flow.

- Improved Approach:

- Reset with structure:

- You are a coding assistant.

- Task: Create a ggplot line chart.

- Context: Data frame with year and revenue.

- Constraints: Use ggplot2, include labels and title

- Output: Code only

- Then iterate systematically using the loop.

- Reset with structure:

The key difference is not more prompts, but better structure in each prompt.

Constraints are not just input instructions, they also inform tests for whether the output is correct”.

Well-designed constraints make it easier to identify problems such as:

- missing required elements

- incorrect format

- use of the wrong tools or libraries

- logically inconsistent results

Example prompt with weak constraints: Plot revenue over time.

- This is difficult to evaluate because there are few clear criteria for correctness.

Example Prompt with strong constraints: Output R code using ggplot2 with labeled axes and a title.

- Now you can define simple tests:

- Did it use ggplot2?

- Are the axes labeled?

- Is there a title?

Good constraints inform evaluation or test criteria.

If you cannot easily test whether the output meets your constraints, the constraints are not specific enough, so revise them with additional details or guidance.

“If you don’t know where you are going, you’ll end up someplace else.” — Yogi Berra

Rule of Thumb: If you can’t evaluate it, you haven’t specified it clearly enough. A vague constraint gives the model nothing to aim at.

19.3.4 Transition to Agentic Systems

These ideas extend directly into working with agentic systems more effectively.

In prompt engineering, you structure instructions; in agentic systems, you structure processes over time.

The same components apply:

- clear tasks

- explicit constraints

- iterative refinement

The difference is agents can:

- use tools

- execute code and

- interact with external services

Prompt engineering is not just about wording; it is about building a repeatable structure for interacting with LLMs in agentic systems.

“In the next section, we take this structure a step further: instead of typing a prompt into a chat interface, we encapsulate it into a function which makes it callable, testable, and reusable as a building block for more complex systems.

19.4 Agentic Systems Overview

Agentic systems are a rapidly evolving area and terminology varies across platforms and providers.

- Terms like tools, functions, skills, and agents are broadly shared, but their precise meaning and implementation differ — For example, skills has a specific technical meaning in Anthropic’s Claude ecosystem that is more structured than casual usage implies.

- This section uses terms consistent with current practice and notes where platform-specific usage may differ from the general concept.

The goal of this section is not to settle every definition, but to give a clear and practical mental model for building and understanding LLM-based systems.

- You do not need to understand all of these in detail yet

- We will revisit these ideas and explore their complexities and tradeoffs as we work through examples and build systems in later sections.

We’ll start with defining augmented LLMS and agentic systems and then make a progression from Prompts to Agents.

Table 19.2 summarizes the core terms and concepts in the mental model for moving from writing prompts to building systems where agents decide what to do next.

| Stage | What It Is | Who Controls the Process |

|---|---|---|

| Prompt | Task + instructions | User |

| Function | Prompt wrapped in code | Code (you call it) |

| Workflow | Sequence of steps | Code (fixed control flow) |

| Agent | A goal-directed system | Model pursues goal using available tools and skills |

The kitchen analogy in Table 19.3 illustrates how these components relate to each other.

| Concept | Kitchen Analogy | Key Point |

|---|---|---|

| Prompt | An order ticket | States what is wanted |

| Function | A named recipe step | Repeatable, callable by name |

| Tool | A kitchen appliance | Does one thing when switched on |

| Skill | A recipe or technique | Encodes how to do a class of tasks |

| Workflow | A fixed meal service | Steps are predetermined by the chef to produce a pre-defined meal |

| Agent | The cook | Has a goal for a meal, selects tools and skills, decides what to do next |

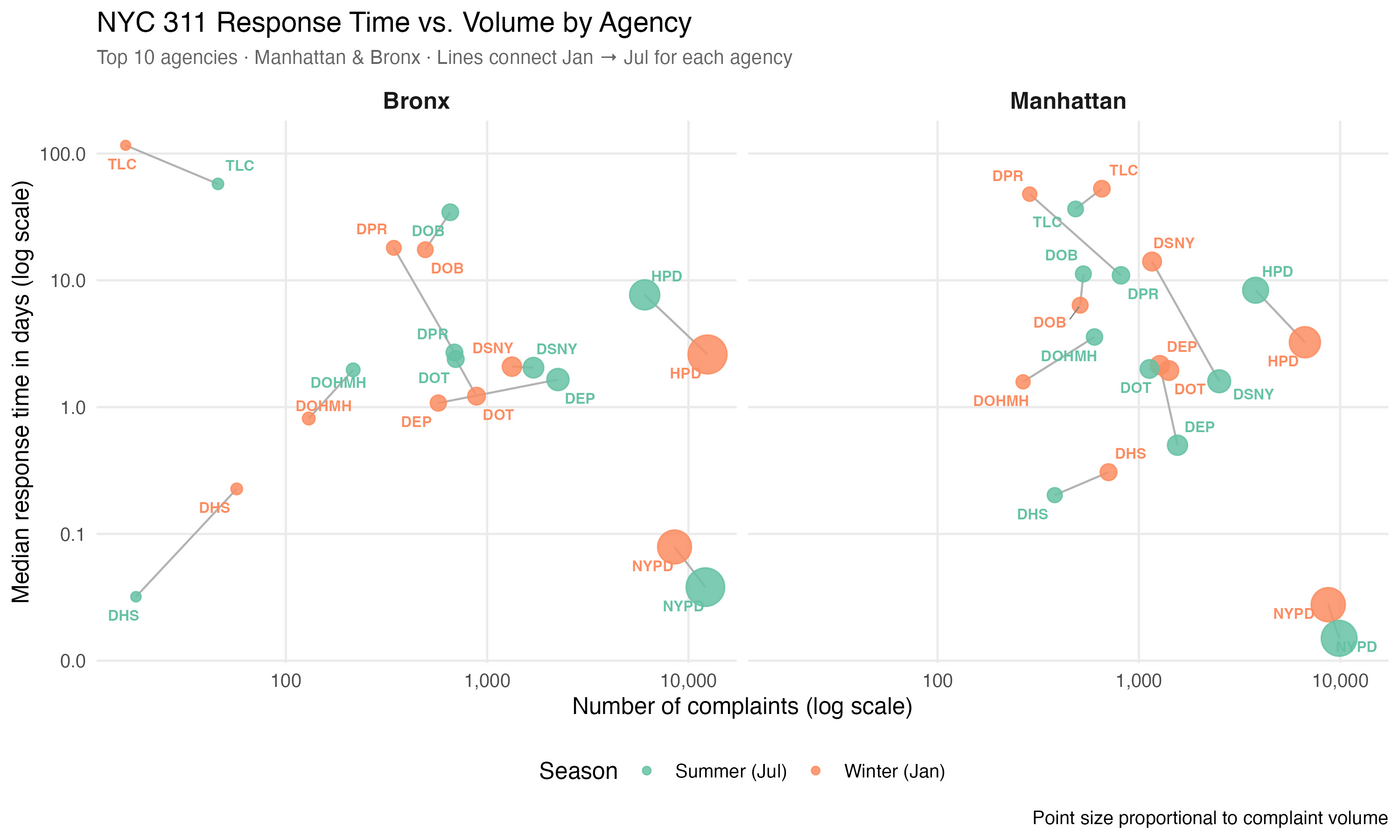

Figure 19.1 provides an alternate visual representation of the progression from prompts to functions and workflows, and then to agents.

- This progression is aligned with a shift from user-driven interactions to model-assisted processes.

- There are tradeoffs across the approaches so no single approach is the best for every problem.

- With appropriate effort, all approaches are capable of producing validated results.

flowchart LR %% MAIN FLOW (FORCED ORDER) A["Prompt<br>Task + Constraints"] --> B["Function<br>Prompt in code"] --> C["Workflow<br>Fixed sequence"] --> D["Agent<br>Dynamic decisions"] %% FORCE ORDER (IMPORTANT) %% A --- B --- C --- D %% Functions CAP["Functions & Tools<br>(prompts, code, APIs)"] B --> CAP CAP --> D %% CONTROL FLOW subgraph control ["Control Flow"] direction LR CF1["User-driven"] CF2["Code invoked"] CF3["Code defines process"] CF4["Model helps define process"] end A --> CF1 B --> CF2 C --> CF3 D --> CF4 %% RESULTS R1["Validated Results<br>Task Complete"] R2["Validated Results<br>Task Complete"] R3["Validated Results<br>Task Complete"] R4["Validated Results<br>Task Complete"] CF1 --> R1 CF2 --> R2 CF3 --> R3 CF4 --> R4

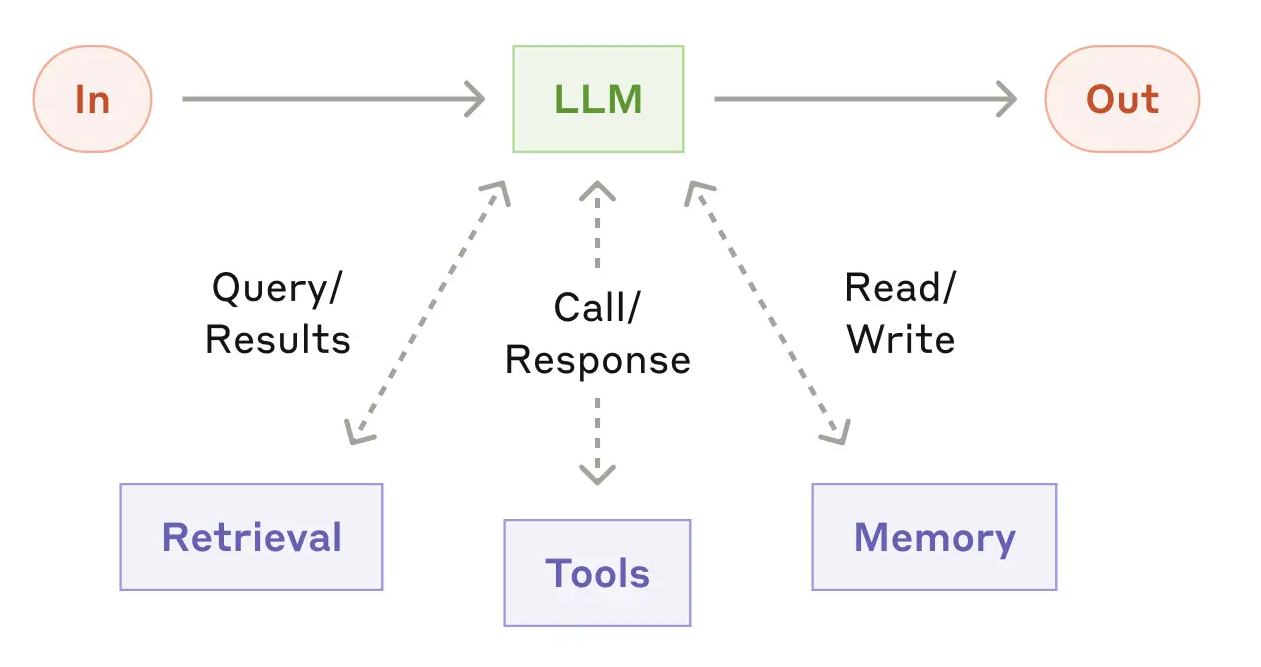

19.4.1 Augmented LLMs and Agentic Systems

A standalone LLM is powerful, but limited. On its own, it:

- responds to a single prompt

- does not take actions in an external environment

- does not retain or manage state across interactions

- does not evaluate or refine its own outputs

To move beyond these limitations, we work with an augmented LLM: a large language model embedded within a broader system.

As illustrated in Figure 19.2, the LLM is connected to:

- Tools: functions or APIs that allow it to take actions

- Memory: mechanisms for storing and retrieving information across steps

- Control logic: code that manages how the system operates

This allows the system to:

- generate follow-up queries

- retrieve additional information

- select tools or skills to perform analysis

- retain and reuse relevant information across steps

Building on this idea, an agentic system is an LLM embedded with tools, memory, and control logic, organized to accomplish tasks in a code-driven manner rather than through a sequence of independent chat interactions.

An LLM becomes truly useful for complex tasks when it is embedded in a system that allows it to act, remember, and iterate, not just respond.

This is the foundation for everything that follows: tools enable action, memory enables persistence, and evaluation enables iteration.

In agentic systems, prompts are no longer one-time interactions.

They become designed components the system can use repeatedly and reliably. The user’s questions shift from:

“What should I ask the LLM right now?”

to “What prompt should this system use every time it performs this task?”

This shift from conversational prompt to designed component is what the progression in Table 19.2 describes.

19.4.2 From Prompts to Functions

To work effectively with LLM APIs, especially when building workflows and agents, we need to move beyond individual prompts and instead convert prompts into functions that are repeatable and structured.

This means explicitly defining in code:

- the inputs

- the instructions and constraints

- the expected type of output

For example:

This makes our prompt now:

- repeatable

- reproducible

- testable

- easier to version (in Git) and refine

- easier to integrate into larger agentic systems

Conceptually, this is the bridge from experimentation to system design:

- Prompts enable experimentation

- Functions provide reusable, callable building blocks

- Workflows organize capabilities into structured processes

- Agents decide which capabilities to use

19.4.3 Workflows

A workflow organizes LLM calls and tools into a predefined sequence of steps.

- The process and control flow are explicitly written in code.

- The sequence of operations is fixed.

- The model fills in details, but does not control the overall structure.

A simple workflow built on the earlier function might look like this:

Here:

generate_code_summary()is a function that encapsulates a prompt to generate code to produce a summary ofmy_data.evaluate_code_summary()is function that checks whether the resulting code is acceptable- the loop defines the workflow

- the control logic is explicit in code

19.4.4 Evaluation

Any time we have a model generate code or an output we want to use, we need to evaluate it before using it in a larger system.

- The evaluation criteria come directly from the constraints you defined in the prompt.

- This is why well-specified constraints matter as much for testing as they do for generation.

The evaluate_code_summary() function performs three conceptual tasks that map to the constraint hierarchy introduced earlier:

- Check execution — does the code run without errors? (Output Goal constraint)

- Validate output structure — is the output the expected type and shape? (Tools / Assumptions constraints)

- Validate output semantics — does the result actually answer the question? (Scope / Output Goal constraints)

A simplified pseudo-code version might look like:

evaluate_code <- function(code, data_context) {

try:

output <- execute(code, environment = data_context)

execution_success <- TRUE

catch error:

execution_success <- FALSE

error_message <- error$message

if (!execution_success) {

return(list(

valid = FALSE,

feedback = paste("The code failed with error:", error_message)

))

}

if (!is_expected_type(output)) {

return(list(

valid = FALSE,

feedback = "The output is not in the expected format."

))

}

if (!passes_reasonableness_checks(output)) {

return(list(

valid = FALSE,

feedback = "The output does not appear to answer the question correctly."

))

}

return(list(

valid = TRUE,

output = output

))

}19.4.5 Agents

While workflows provide structure and reliability, they are limited to predefined sequences of steps.

An agent shifts some of that control to the model itself.

- The model decides which action to take

- The sequence of steps is not fully predetermined

- The system may choose among multiple tools or strategies

A concise working definition:

An agent is a system where the model decides what to do next, rather than following a fixed sequence of steps.

for (i in 1:max_steps) {

action <- call_model("

Given the task and current state,

choose the next action:

- generate_code_summary

- plot_data

- stop

")

if (action == "generate_code_summary") {

code <- generate_summary_code("nyc311_clean")

result <- evaluate_code_summary(code)

} else if (action == "plot_data") {

plot <- generate_code_plot("nyc311_clean")

result <- evaluate_code_plot(plot)

} else if (action == "stop") {

break

}

# model sees results and decides next step

}The distinction can be summarized as:

- Workflow: we define the process and control flow, and the model fills in the details

- Agent: we define the available functions and tools, and the model helps determine which to use and in what order.

Analogy: Workflows vs Agents and Programming Models

If you are familiar with R Shiny or Dash, it may be helpful to think about workflows and agents in terms of declarative versus reactive programming.

- A workflow is similar to declarative or pipeline-based programming:

- The sequence of steps is explicitly defined

- The control flow is fixed

- Each step executes in a predictable order

- An agent is closer in spirit to reactive systems:

- The next action depends on the current state

- The system adapts dynamically based on intermediate results

- The sequence of operations is not fully predetermined

Note: This is an analogy, not an exact equivalence.

- Reactive systems like Shiny are deterministic and event-driven, while agents rely on probabilistic model outputs to decide what to do next.

- The analogy is useful for intuition about fixed vs dynamic control, but the underlying mechanisms are different.

An Agent acts by calling functions and tools, the concrete building blocks you define in code and make available to the model.

These building blocks typically take three forms:

- Functions: functions you write that the model can invoke (e.g.,

generate_code_summary()) - Tools: connections to external services or execution environments (e.g., run code, query a database, call an API)

- Skills: reusable packages of instructions and code for recurring tasks.

- These are a more structured form of prompt-based function, used in some platforms including Claude Code.

- The concept is platform-portable even if the specific format varies.

The term capabilities appears frequently in general discussion of LLM systems and means roughly the same thing: what the system is able to do.

- We use the more specific terms here because they correspond directly to things you will write and call in R.

Agents are:

- more flexible

- better suited for open-ended tasks

- capable of adapting based on intermediate results

But they are also:

- harder to debug

- less predictable

- more sensitive to prompt and evaluation design

19.4.6 Choosing Between Workflows and Agents

Many tasks can be handled effectively without using agents.

Well-designed workflows are often:

- easier to implement

- more reliable

- simpler to debug

- sufficient for structured tasks

As with machine learning, increasing flexibility and capability comes with increased complexity and reduced predictability.

The goal is to match the level of system complexity to the problem.

- Use workflows when the task is structured and predictable

- Use agents when the task requires flexibility, adaptation, or decision-making

The concepts introduced here, prompts, functions, workflows, and agents, become easier to understand through working code than through description alone.

The next section introduces ollama, a tool for running LLMs locally on your own machine, and works through examples of each of these components to illustrate how they work and how they fit together in practice.

19.5 Building Workflows with Ollama

Section 19.2 introduced prompt engineering as an interactive practice of writing, refining, and iterating on prompts through a chat interface.

Section 19.3 disucssed developing a more structure approach for code-driven interactions with LLMs.

Section 19.4 introduced the concept of agentic systems as code-driven systems that use LLMs to generate and execute code, evaluate results, and make decisions about next steps.

It is time to move from discussion to practice.

- This section works through concrete examples of calling a model from R, encapsulating prompts as functions, and building workflows that generate, evaluate, and refine code.

To do that we need a way to call a model programmatically from R — and that is where Ollama comes in.

19.5.1 What is Ollama?

Ollama is an open-source tool that allows you to download and run large language models locally on your own machine.

Ollama provides:

- a model library with a growing collection of open-source LLMs that can be downloaded with a single terminal command

- a local server that runs as a background process and exposes a simple HTTP API for sending prompts and receiving responses

- a command-line interface for managing models, checking status, and testing prompts interactively in the terminal

When Ollama is running it behaves like a local version of a cloud API — your R code sends a prompt over HTTP to localhost and receives a response exactly as it would with a cloud provider, but without leaving your machine.

This makes Ollama a practical environment for exploring LLMs and building workflows for several reasons:

- No API key or account required: models run entirely on your local machine, so you can experiment immediately without setting up credentials or worrying about usage costs

- Full visibility into requests and responses: because you control the HTTP call directly, you can see exactly what is sent to the model and what comes back, which makes it easier to understand and debug the prompt-response cycle

- Multiple models available locally: you can pull and compare different models with a single terminal command, making it straightforward to observe how model size, training focus, and architecture affect output quality for the same prompt

- Fast iteration: local inference avoids network latency and rate limits, so the edit-run-evaluate loop is faster during development and the workflow concepts are not obscured by waiting for API responses

- Direct transfer to cloud APIs: the same prompt functions, evaluation logic, and workflow patterns work with cloud APIs such as Anthropic or OpenAI by swapping the calling function, e.g.,

call_ollama(), for a different model interface function; the rest of the system stays unchanged

19.5.2 Using Ollama from R

To install Ollama see the Install Ollama appendix (Appendix C) or go to <https://ollama.com>>.

Use the terminal to “pull” at least two models

llama3.2for lightweight general prompting -ollama pull llama3.2:1bqwen2.5-coder:3bfor coding tasks -ollama pull qwen2.5-coder:3b

We can now use these models to illustrate several concepts

- Use interactive prompts to compare outputs across models

- Encapsulate prompts into functions

- Build a simple workflow

- Create an agentic system

19.5.2.1 Starting and Verifying Ollama

Before using Ollama from R, ensure the local ollama service is running.

- On MacOS or Windows, launching the Ollama application will start the background service.

- You can also start it from the terminal:

You should get output similar to

[GIN] 2026/04/04 - 10:50:21 | 200 | 274.5µs | 127.0.0.1 | GET "/api/version"To check the installed models, use the terminal with

You should get output similar to the following but based on the models you installed.

AME ID SIZE MODIFIED

qwen2.5-coder:3b f72c60cabf62 1.9 GB 23 hours ago

qwen2.5-coder:7b dae161e27b0e 4.7 GB 23 hours ago

llama3.2:latest a80c4f17acd5 2.0 GB 14 months ago To check it is configured properly, test a model in the terminal.

Your terminal cursor should change to a blinking prompt and you can type a question to the model.

- For example: describe data science in one sentence?

- You may get a response similar to the following:

>>> describe data science in one sentence

Data science is the process of extracting insights, knowledge, and value from

data, using a combination of mathematical and computational techniques, such as

machine learning, statistics, and programming, to inform business decisions,

solve complex problems, or answer strategic questions.- To stop the current model use CTRL D in the terminal, or, if you are using the Ollama app, click the “Stop” button.

Ollama supports interactive chat in the terminal but we want to interact with Ollama through R, and we will see how to do that in the next section.

19.5.2.2 Calling Ollama from R

We access the model using a function call over HTTP using the {httr2} package.

- By default, Ollama runs on

http://localhost:11434.

Let’s define a function to call the model, with arguments for the prompt and the model of choice, and then parse the JSON response and return the response element.

- The function creates and sends a {httr2} request to the Ollama

/api/generateendpoint.

req_body_json()creates structured input for the model- model: the model to run

- prompt: the task or question

- stream: controls how results are returned

stream = FALSEmeans don;t stream the results; wait for the model to finish the full response before returning it so the return is one JSON object thatresp_body_json()can handle.

Now let’s use the function to send a prompt to the model.

[1] "A workflow refers to a series of steps or processes defined and executed for completing a specific task or goal."We can send the same prompt to different models and compare the results.

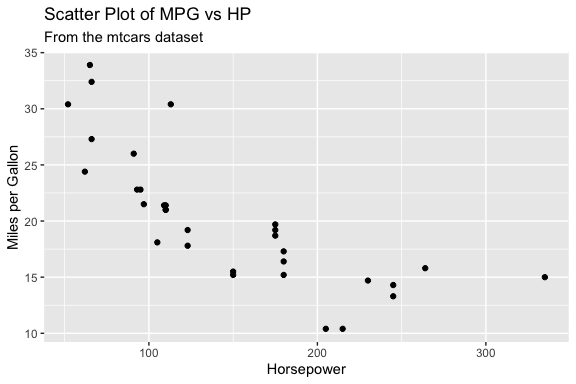

[1] "Here is a simple R code that uses ggplot2 to create a scatter plot of MPG vs HP from the built-in 'mtcars' dataset:\n\n```r\n# Load necessary libraries\nlibrary(ggplot2)\n\n# Create the scatter plot\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"Scatter Plot of MPG vs HP\",\n subtitle = \"From the mtcars dataset\",\n x = \"Horsepower\",\n y = \"Miles per Gallon\")\n```\n\nIn this code:\n\n- We first load the ggplot2 library.\n- Then, we use `ggplot()` to create a new ggplot object, passing in the 'mtcars' dataset and specifying that 'hp' should be on the x-axis ('x') and 'mpg' should be on the y-axis ('y').\n- The `geom_point()` function is used to add points to the plot for each observation in the data.\n- Finally, we use the `labs()` function to set labels for the title, subtitle, x-axis, and y-axis of the plot."[1] "Certainly! Below is an example of how you can use the `ggplot2` package in R to plot `mpg` (miles per gallon) against `hp` (horsepower) from the `mtcars` dataset.\n\n```r\n# Load the ggplot2 package\nlibrary(ggplot2)\n\n# Create a scatter plot\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"Miles per Gallon vs Horsepower\",\n x = \"Horsepower (hp)\",\n y = \"Miles per Gallon (mpg)\") +\n theme_minimal()\n```\n\nThis code will generate a scatter plot with `hp` on the x-axis and `mpg` on the y-axis. The `geom_point()` function is used to create the points, and `labs()` is used to add titles and labels to the axes and the plot. The `theme_minimal()` function sets a clean and minimalist theme for the plot."[1] "Sure! Below is an example of R code that uses `ggplot2` to create a scatter plot showing the relationship between miles per gallon (`mpg`) and horsepower (`hp`) from the `mtcars` dataset.\n\nFirst, make sure you have `ggplot2` installed. If not, you can install it using the following command:\n\n```R\ninstall.packages(\"ggplot2\")\n```\n\nThen, load the necessary libraries and create the plot:\n\n```R\n# Load the required library\nlibrary(ggplot2)\n\n# Create a scatter plot of mpg vs hp from mtcars\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"MPG vs HP\",\n x = \"Horsepower (hp)\",\n y = \"Miles per Gallon (mpg)\") +\n theme_minimal()\n```\n\nThis code will generate a scatter plot with horsepower on the x-axis and miles per gallon on the y-axis. The `theme_minimal()` function is used to apply a clean, minimalistic theme to the plot.\n\nYou can customize the plot further by adding additional layers or modifying the appearance using various `ggplot2` functions."- Oops! the models return formatted text, not tidyverse style code we want.

This is what models do, they return text.

LLMs don’t return answers; they return text that must be interpreted.

The text in a response may include:

- explanatory text

- formatting characters such as

\nfor new lines. - markdown code fences

- comments

- installation commands we do not want to run

To compare models more clearly, it is helpful to extract just the code portion of the response.

19.5.3 Extracting Code from Model Responses

Let’s create a function to extract code from the response and name it extract_code().

We want the function to:

- Extract the code chunks

- Remove code fences and formatting characters

- Replace the escaped new line characters with actual new lines.

Now extract the code from the responses

code_llama <- extract_code(resp_llama)

code_qwen3b <- extract_code(resp_qwen3b)

code_qwen7b <- extract_code(resp_qwen7b)

code_llama[1] "# Load necessary libraries\nlibrary(ggplot2)\n\n# Create the scatter plot\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"Scatter Plot of MPG vs HP\",\n subtitle = \"From the mtcars dataset\",\n x = \"Horsepower\",\n y = \"Miles per Gallon\")\n"[1] "# Load the ggplot2 package\nlibrary(ggplot2)\n\n# Create a scatter plot\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"Miles per Gallon vs Horsepower\",\n x = \"Horsepower (hp)\",\n y = \"Miles per Gallon (mpg)\") +\n theme_minimal()\n"[1] "install.packages(\"ggplot2\")\n"We now have the code from each model as a string. We can use base R functions to view it and execute it.

cat()is useful to view the code with proper formatting and new lines.eval(parse())allows us to execute the code as R code.

Let’s compare all three.

llama code

# Load necessary libraries

library(ggplot2)

# Create the scatter plot

ggplot(mtcars, aes(x = hp, y = mpg)) +

geom_point() +

labs(title = "Scatter Plot of MPG vs HP",

subtitle = "From the mtcars dataset",

x = "Horsepower",

y = "Miles per Gallon")

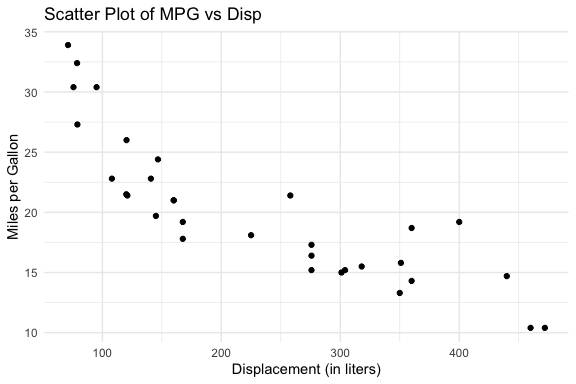

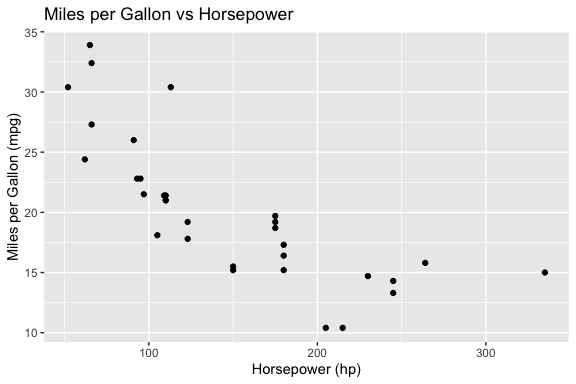

qwen3b code

# Load the ggplot2 package

library(ggplot2)

# Create a scatter plot

ggplot(mtcars, aes(x = hp, y = mpg)) +

geom_point() +

labs(title = "Miles per Gallon vs Horsepower",

x = "Horsepower (hp)",

y = "Miles per Gallon (mpg)") +

theme_minimal()

qwen7b code

install.packages("ggplot2")The following package(s) will be installed:

- ggplot2 [4.0.3]

These packages will be installed into "~/Courses/DATA-413-613/lectures_book/renv/library/macos/R-4.5/aarch64-apple-darwin20".

# Installing packages --------------------------------------------------------

[32m✔[0m ggplot2 4.0.3 [linked from cache]

Successfully installed 1 package in 4.9 milliseconds.Interpretation of results

- Did the models all return usable code?

- Which model produces the best code?

- Which is fastest?

- Which requires the least editing?

This illustrates a key idea: Model selection is part of system design.

When working with LLMs, you must be prepared for inconsistent response structure.

The extract_code() function works well when the model returns a single code block, however,

- Repeating the same prompt may produce responses with multiple code blocks

extract_code()only extracts the first block, which may not be the one you want to run

This highlights an important principle:

Workflows should be designed for the range of outputs a model may produce, not just a single observed response.

To handle this variability, we can extract all code blocks with str_extract_all() and then decide which one(s) to use.

This approach makes the workflow more robust by separating:

- extraction (get all candidate code)

- selection (decide what to run)

In more advanced systems, this selection step may itself become part of the evaluation process.

19.5.4 Encapsulating Prompts in Functions

Instead of repeatedly writing prompts, we now encapsulate them in a function that takes parameters and generates the appropriate prompt as in Listing 19.7.

- This is a thin wrapper function we will expand later.

- The default model is

qwen2.5-coder:3b, a model trained for code-generation, that balances output quality with response speed for local use.- The 7B version produces better results but is noticeably slower, which matters when rendering a document or iterating quickly.

- This is a common trade off in system design: a faster, smaller model is often the right default for development and experimentation, with a larger model reserved for final runs or tasks where quality is critical.

Now we can reuse our prompts, get the response, extract the code, and evaluate if the response included code.

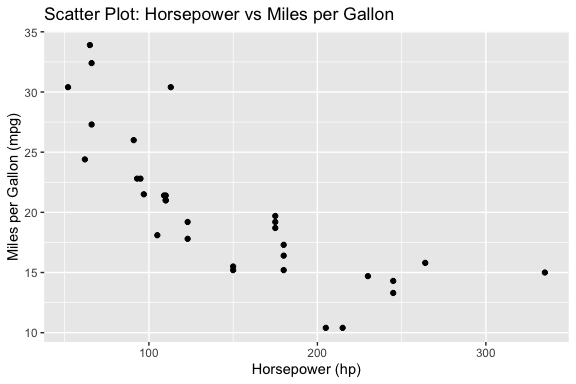

my_prompt <- "Write R code using ggplot2 to plot mpg vs hp from mtcars."

response <- get_model_response(prompt = my_prompt)

my_code <- extract_code(response)

if (!is.null(my_code)) {

eval(parse(text = my_code))

}

- Keeping the model response separate from the code extraction and execution at first allows for easier debugging and validation of the model output before running it.

Once you are satisfied the responses are consistent, you can wrap the two functions in a single function that generates the code and executes it.

This code may get a plot of mpg vs hp from the mtcars dataset or it may generate a prompt in the console to install {ggplot2} as the model wanted to ensure the packages were installed.

This is an example of a context-limited response: the model is trying to be helpful by ensuring the system has the necessary packages, but that is creating an interactive step which is not what we want in this case, especially if we want to render the file.

There are several option to handle this but the first step is to give the model more context and we do that by adding more constraints to our prompt.

Let’s refine the prompt to add a role and more constraints

my_prompt <- paste(

"You are a coding assistant working in R.",

"Write R code using ggplot2 to plot hp vs mpg from mtcars.",

"Assume all required packages are already installed.",

"Do NOT include install.packages().",

"Use library() only if needed.",

"Return exactly one fenced R code block.",

"Use at least one geom to plot the points."

)The role assignment, “You are a coding assistant working in R”, signals to the model that it should prioritize concise, executable code over explanation or installation instructions.

- This is the same role concept introduced in Table 19.1, now applied as a concrete line in a prompt.

However, adding this line manually to every prompt is error-prone and inconsistent.

- A better approach is to make the role a parameter or argument of the

get_model_response()function itself (Listing 19.7).- Choose a default for the role that is appropriate for many tasks.

- In this case, “You are a coding assistant working in R.” is a good role for many of our tasks.

- You may also get an error due to incomplete code in the response, e.g., missing a closing parenthesis.

To make the code more robust, the next step is to add error checking into the code.

- We can use

tryCatch()to handle cases where the model response is not as expected and generates an error condition.

Let’s create a safe_execute() function to wrap the code in a tryCatch() block that returns a list with success status and either the result or the error message.

Now update generate_and_run_plot() from Listing 19.8 to use safe_execute() to run the code.

- The function in Listing 19.10 returns a structured result that

- includes success status,

- the generated code (if successful),

- the result of execution with any error message.

generate_and_run_plot <- function(prompt, model = "qwen2.5-coder:3b") {

response <- get_model_response(prompt, model)

code <- extract_code(response)

if (is.null(code)) {

return(list(success = FALSE, error = "No code extracted"))

}

exec <- safe_execute(code)

# for interactive use, check if execution was successful and print error if not

if (!exec$success) {

cat("Execution failed:\n", exec$error, "\n")

}

return(list(

success = exec$success,

code = code,

result = if (!is.null(exec$result)) exec$result else NULL,

error = if (!is.null(exec$error)) exec$error else NULL

))

}Now when we run the function, we get a structured output that indicates whether the code execution was successful, the generated code if successful, and any error messages if it failed.

$success

[1] TRUE

$code

[1] "# Load the ggplot2 package\nlibrary(ggplot2)\n\n# Create a scatter plot of hp vs mpg from mtcars dataset\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point() +\n labs(title = \"Scatter Plot: Horsepower vs Miles per Gallon\",\n x = \"Horsepower (hp)\",\n y = \"Miles per Gallon (mpg)\")\n"

$result

$error

NULL

When running code generated by a model, errors are common and should be expected.

The tryCatch() function allows us to run code without stopping execution if an error occurs.

- Instead of crashing, the error is captured and returned as information the system can use.

The tryCatch() function wraps an expression and monitors its execution.

- It attempts to run the code inside the main block

- If the code completes normally, the result is returned

- If an error occurs, execution is interrupted, and control is passed to the error handler

In python, the (try / except) block serves a similar purpose, allowing you to handle exceptions gracefully without crashing the program.

try:

result = eval(code)

output = {"success": True, "result": result}

except Exception as e:

output = {"success": False, "error": str(e)}Conceptually, this creates two possible paths:

- Success path: code runs and returns a result

- Error path: the code fails, and the error handler captures the condition and returns structured information

In this sense, tryCatch() turns execution into a controlled branching process. As shown in Listing 19.9, instead of letting an error terminate execution, tryCatch():

- intercepts the error condition

- extracts relevant information (e.g., the message)

- returns it as part of a structured output

This enables a more robust workflow:

- The code can be attempted safely

- Errors can be inspected and reported

- The system can decide what to do next (e.g., revise the prompt or try again)

Using tryCatch() converts an code-stopping error into a structured output that can be used to inform the next steps in the workflow.

This pattern is critical in workflows and agentic systems because:

- it allows the system to continue running

- it makes errors observable and usable

- it enables decisions such as retrying, revising prompts, or selecting a different action

tryCatch() does not prevent errors, it makes them part of the system’s output that can be evaluated by humans or by code.

19.5.5 Workflows

Up to this point, we have focused on building individual components.

We now combine them into workflows, the fixed, code-controlled sequences described in Table 19.2.

19.5.5.1 Workflow 1: Generate -> Execute -> Evaluate (baseline loop)

This is Listing 19.9 converted into a workflow pattern.

- This is a baseline workflow where the control flow is fixed and defined entirely in code and we get results (plot or error) that can be evaluated.

run_once <- function(prompt, model = "qwen2.5-coder:3b") {

response <- get_model_response(prompt, model)

code <- extract_code(response)

if (is.null(code)) {

return(list(

success = FALSE,

code = NULL,

result = NULL,

error = "No code extracted"

))

}

exec <- safe_execute(code)

return(list(

success = exec$success,

code = code,

result = if (!is.null(exec$result)) exec$result else NULL,

error = if (!is.null(exec$error)) exec$error else NULL

))

}

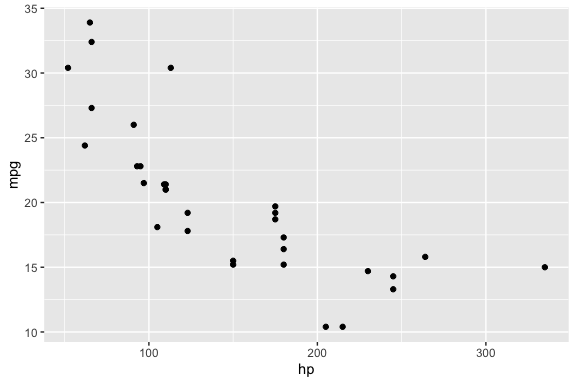

run_once(my_prompt)$success

[1] TRUE

$code

[1] "library(ggplot2)\n\nggplot(mtcars, aes(x = hp, y = mpg)) +\n geom_point()\n"

$result

$error

NULL- Note: we removed the interactive feedback for humans to keeps the functions clean for workflows and agents, but it also means we won’t see the error in the console if it occurs.

- If we want, we can add it back in the console to see the error if it occurs.

19.5.5.2 Workflow 2: Generate -> Evaluate -> Revise using an iterative loop

This workflow introduces feedback and iteration by combining functions that perform computation (e.g., model calls, code execution) with flow-control structures such as loops.

- The loop provides a natural way to track progress across attempts and observe how the workflow evolves over time.

- When designing workflows, remember that functions return results; workflows manage and monitor the process.

Listing 19.12 is a basic “Generate -> Evaluate -> Revise” loop that allows the system to attempt a task multiple times.

- If there is an error, the workflow refines the prompt by adding the task to fix the error (message) from the unsuccessful attempt.

run_with_retry()subsumesrun_once()since settingmax_attempts = 1is equivalent.

run_with_retry <- function(prompt, model = "qwen2.5-coder:3b",

max_attempts = 3) {

for (i in 1:max_attempts) {

cat("Attempt:", i, "\n")

response <- get_model_response(prompt, model)

code <- extract_code(response)

if (is.null(code)) {

next

}

exec <- safe_execute(code)

if (exec$success) {

return(list(success = TRUE, code = code))

}

# simple refinement strategy

prompt <- paste(

prompt,

"\nFix the error:",

exec$error

)

}

return(list(success = FALSE, error = "Max attempts reached"))

}- It’s good practice to avoid printing messages inside functions to keep them reusable and composable.

- However, it is appropriate to use

cat()messages as part of the flow control within the workflow to log progress.- In agentic systems, using logging to track the flow is important because the sequence of actions is not fixed.

- In more complex systems, logging data is often written to files rather than printed to the console, allowing results to be stored, analyzed, and reused across runs.

This simple workflow works, but exposes limitations that motivate a more structured approach for the workflow:

- the prompt keeps growing and becoming noisy as the same error message may get added over and over

- missing code is not handled well

- constraints may be lost over iterations

- evaluation is minimal (only execution success)

We can improve the workflow by making the evaluation and refinement steps more explicit.

19.5.5.3 A Refined Workflow 2: Generate -> Evaluate -> Revise

So far, evaluation has been minimal: the workflow only checks whether the generated code can be extracted and executed.

- In practice, that is often not enough.

A stronger evaluation strategy separates at least three kinds of checks in addition to Execution checks:

- Structure checks: ensure the code contains expected building blocks or elements.

- Task-specific checks: require you to know specific elements of the prompt, e.g., the data set or variables, to ensure the code appears to satisfy the specific analytical goal.

- Process Constraints: enforce workflow rules (e.g., disallow install.packages()).

For example, for a plotting task, these can be distinguished as follows:

- Structure checks: These ensure the code has the basic elements of a plot.

- does the code contain “ggplot(”?

- does it include at least one “geom_ layer”?

- Task-specific checks: These ensure the code is actually trying to solve the intended task, not just producing some plot.

- are the correct variables used (e.g., hp and mpg)?

- are they mapped to the correct axes?

- Process Constraints These enforce workflow constraints, not the task itself.

- does the code avoid “install.packages(”?

These try to ensure the code is actually solving the intended task, not just producing some plot.

Task-specific checks require some explicit representation of what the prompt is asking for.

- If the prompt remains fully flexible, then task-specific evaluation must also remain relatively general.

One way to support stronger evaluation later is to include two parts to the prompt:

- the prompt text used for generation which can be free text

- a structured set of expected elements to be used for validation e.g.,

expected = list(

dataset = "mtcars",

variables = c("hp", "mpg"),

required = c("ggplot", "geom_"),

forbidden = c("install.packages")

)This allows the workflow to remain flexible while still supporting more targeted evaluation based on structured data in the prompt.

In this example, the prompt is still a flexible argument so task-specific evaluation is limited.

- It is more practical now to focus on structure, constraint, and execution checks.

- More specific evaluation becomes possible when the system has explicit expectations about the task, such as keywords, variables, datasets, or required output elements.

Let’s encapsulate the structure and process checks in a function as in Listing 19.13.

evaluate_plot_code_structure <- function(code) {

checks <- list(

has_ggplot = stringr::str_detect(code, "ggplot\\("),

has_geom = stringr::str_detect(code, "geom_"),

installs_packages = stringr::str_detect(code, "install\\.packages\\s*\\(")

)

list(

success = checks$has_ggplot && checks$has_geom && !checks$installs_packages,

checks = checks

)

}We can use the evaluate_plot_code_structure() function to make the workflow more robust by no longer treating every executable result as a successful result for our task.

Listing 19.14 shows a refined workflow that makes the stages clear and uses a better evaluation and prompt refinement strategy.

- This version now separates two kinds of failure:

- evaluation failure: the code does not meet the expected structure or task constraints

- execution failure: the code looks reasonable but still does not run

run_with_retry <- function(prompt,

model = "qwen2.5-coder:3b",

max_attempts = 3) {

original_prompt <- prompt

last_error <- NULL

last_code <- NULL

last_checks <- NULL

for (i in 1:max_attempts) {

cat("Attempt:", i, "\n")

# Step 1: generate a response from the model

response <- get_model_response(prompt, model)

# Step 2: extract code from the response

code <- extract_code(response)

last_code <- code

# Step 3: handle missing code explicitly

# If no fenced R code block was extracted, we refine the prompt by asking for exactly one code block.

if (is.null(code)) {

last_error <- "No executable code block was extracted from the response."

prompt <- paste(

original_prompt,

"\nThe previous response did not contain a usable R code block.",

"Return exactly one fenced R code block.",

"Do not include explanation."

)

next

}

# Step 4: evaluate the code before execution

# Evaluate structure/process constraints: 'ggplot()', 'geom_', and no 'install.packages()'.

eval_result <- evaluate_plot_code_structure(code)

# Preserve checks from last evaluation attempt for the failure return at the end of the function

last_checks <- eval_result$checks

# Step 5: if structure/process checks fail, refine the prompt accordingly

# If any structure/process conditions are violated, we build a message explaining what was wrong

# and use it to refine the prompt. This helps the model correct the specific problem.

if (!eval_result$success) {

refinement_messages <- c()

# 'ggplot()' missing: structure problem.

if (!eval_result$checks$has_ggplot) {

refinement_messages <- c(

refinement_messages,

"The previous code did not include ggplot()."

)

}

# No geom_ layer: structure problem.

if (!eval_result$checks$has_geom) {

refinement_messages <- c(

refinement_messages,

"The previous code did not include a geom_ layer such as geom_point()."

)

}

# install.packages() found: process constraint problem.

if (eval_result$checks$installs_packages) {

refinement_messages <- c(

refinement_messages,