Laws, Guidelines, and Ethics

Considerations for Responsible Data Science

American University

1/22/23

Learning Outcomes

By the end of this discussion, you should be able to:

- Differentiate Legal, Professional, and Ethical Considerations

- Identify Sources of Ethical Issues in Data Science

- Apply Frameworks and Recommended Practices for Ethical Reasoning

  - Philosophical Frameworks

  - Professional Codes of Conduct

  - Technical Guidelines

- Analyze data science work from legal, professional, and ethical perspectives

Agenda

- Legal, Professional, and Ethical Considerations

- Ethical Frameworks

- Recognizing Ethical Issues

- What Can You Do? What Should You Do?

- References

CAVEAT: This presentation provides general background to facilitate educational discussion.

It does not offer any form of legal advice or recommendations.

I. Legal, Professional, and Ethical Considerations

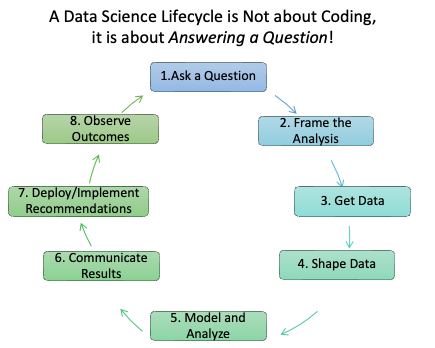

Data Science Has a Life Cycle

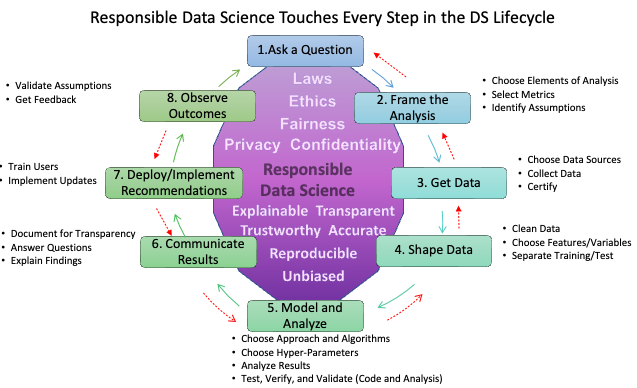

Responsible Data Science Asks Questions Across the Life Cycle.

Data Scientists Make Choices When Answering Ethical Questions

- What kinds of projects do I work on?

- What questions do I analyze?

- Who are the Stakeholders?

- Towards what goals do I optimize my models?

- How do I get my data? How do I protect my data?

- Is my data representative of the population?

- What do I do about “bad” or missing data?

- What do I do with outliers that “mess up” my results?

- What variables/features/attributes do I use?

- How much effort do I put into checking my results? Are they repeatable?

- How do I leverage/credit other people’s work?

- How do I report my results - what is intellectual property and what should be public?

- …

- Choices can involve Legal, Professional, or Ethical Considerations

Choices have consequences, with benefits and risks.

Legal Considerations

- Legal considerations address the criminal/civil risks for violating laws.

Laws (statutes) specify permissible and/or impermissible activities, as well as potential judicial punishments (e.g., imprisonment).

Policies (regulations) provide guidance on implementing activities within legal constraints, along with adjudication procedures and potential non-judicial punishments (e.g., debarment).

Legal issues in big data include how you gather, protect, and share data, and increasingly, how you use it.

Laws are “local”, not universal: one nation’s privateer is another nation’s pirate.

Laws do not keep pace with technology and can be difficult to interpret.

When in doubt, ask a lawyer competent in the issue.

Laws Proscribe Some Choices

- Family Educational Rights and Privacy Act (FERPA) (US DOEd 2017)

- Fair Credit Reporting Act (FTC 2013b)

- Fair Housing Act (DHUD 2021)

- Equal Employment Opportunity Act (EEOC 2021b)

- Children’s Online Privacy Protection Act (COPPA) and Rule (FTC 2013a)

- Genetic Information Nondiscrimination Act (GINA) (EEOC 2021a)

- European General Data Protection Regulation (GDPR) (Wikipedia 2022)

  - See “What is GDPR?” (Burgess 2020)

- California Consumer Privacy Act (CCPA) (Edelman 2020)

- And many more … with the expectation of more to come …

- Consider recent Congressional interest in Section 230 of the Communications Decency Act of 1996.

Professional Considerations

- Professional considerations address the risks of activities related to the organizations with which you affiliate:

  - Professional Organizations

  - Employer Organizations

- Organizational bylaws or policies identify and manage risk to the institution.

- These may include professional “Codes of Conduct” or “Codes of Ethics”:

  - American Statistical Association (ASA 2021)

  - Association for Computing Machinery (ACM 2021)

  - INFORMS Ethical Guidelines (INFORMS 2021)

  - Data Science Association (Association 2021)

  - AMA Journal of Ethics addresses big data in health care (AMA 2021)

- Organizational behavior and ethics may conflict with individual ethics.

- Choices can include trying to change the organization (and possibly offending individuals) or leaving, or being forced to leave, the organization, potentially with legal issues as well.

When in doubt, ask a mentor or manager you trust.

Ethical Considerations

Ethical considerations arise when asking

“What should I do?”

“What is right?”

Ethical Choices can be hard, especially when choices may require violating a law, regulation, or professional guideline.

Individual principles and cultural norms shape options and guide choices in complex situations.

Often, there is no universally accepted, or even good, “right answer”.

You may have to choose between two bad outcomes; the Trolley Problem has many analogs (Merriam-Webster 2021).

You may have to choose between individual and group outcomes.

Ethical choices can lead to feelings of guilt, group reprobation, civil action (torts), or criminal charges.

Many, many frameworks attempt to guide ethical choices.

II. Ethical Frameworks

Three (of Many) Ethical Frameworks

- Consequentialism or Utilitarianism: seek the greatest balance of good over harm (for groups or individuals)

  - Choose the future outcomes that produce the most good

  - Compromise is expected, since the end justifies the means

- Duty: Do your Duty, Respect Rights, Be Fair, Follow Divine Guidance

  - Do what is “right” regardless of the consequences or emotions

  - Everyone has the same duties and ethical obligations at all times

- Virtues: Live a Virtuous Life by developing the proper character traits

  - Ethical behavior is whatever a virtuous person would do

  - Tends to reinforce current cultural norms as the standard of ethical behavior

Frameworks can conflict with each other, and each can be “wrong” when taken to the extreme.

No single or simple right answer!

Using Frameworks in Ethical Decisions

- Recognize There May Be an Ethical Issue

  - Assess the underlying definitions, facts, assumptions, and constraints

- Consider the Parties Involved

  - Which individuals or groups might be harmed or benefit, and when?

- Gather All Relevant Information

  - Are you missing key facts? Are they knowable?

- Formulate Actions and Evaluate Them under Alternative Frameworks

  - What will produce the most good and do the least harm? (Utilitarian)

  - What respects the rights of everyone affected by the decision? (Rights)

  - What treats people equally or proportionately? (Justice)

  - What serves the entire community, not just some members? (Common Good)

  - What leads me to act as the sort of person I want to be? (Virtues)

- Examine Alternatives and Make a Decision

- Act and Observe

- Assess and Reflect on the Outcomes

III. Recognizing Ethical Issues

Our Biased Brains Helped Us Survive

- Our brains have evolved mechanisms to make quick decisions.

These are the source of “Unconscious Biases” or “Implicit Biases”.

- Unconscious stereotypes, prejudices, or attitudes that affect our decision-making, perception, and social behavior (“Implicit Bias Vs Unconscious Bias: Types Ways to Prevent Them” 2022).

- Friend or Threat, Familiar or Strange, Us versus Them

- Learn more and test yourself at Project Implicit (Implicit 2022).

Under stress, we tend to bypass the higher-level cognitive centers that evolved later and take more time to reason.

Humans get comfortable with patterns, which can lead to systematic deviations from rational judgment.

Ethical Challenges can arise from our own implicit biases or the implicit biases of others affecting our data, thoughts, and actions.

Short-term choices under stress may be bad in the long run ….

Bias in Data and Algorithms

Not a new issue - goes back decades.

However, the explosive growth of AI/Machine Learning systems that support, and even implement, decisions is generating concerns in multiple fields.

Are algorithms really less biased than people?

How can you tell with “black box” models?

What are the trade-offs among accuracy, explainability, and transparency?

Bias in the (training) data (historical, sampling, …) can drive biased outcomes.

Algorithms find “hidden” relationships among proxy variables that can distort the interpretations.

When is it ethical to use Machine Learning or other Big Data systems?

Active area for research and publication. Three examples:

- MIT AI Blindspot Cards (MIT-Media-Lab 2019)

- Understand, Manage, and Prevent Algorithmic Bias (Baer 2019)

- University of Chicago’s Algorithmic Bias Playbook (UChicago, n.d.)

Three Articles for Discussion

Higher error rates in classifying the gender of darker-skinned women than of lighter-skinned men (O’Brien 2019)

Big Data used to generate unregulated e-scores in lieu of FICO scores in credit lending (Bracey and Moeller 2018)

- Discussion Questions

  - Is there an ethical issue, or more than one? What is it?

  - Who is affected, and who is responsible?

  - What would you do differently or recommend?

More Examples of Ethical Issues

Contradictions and competition among legal, professional, and ethical guidelines

Using biased data (even unknowingly) or eliminating extreme values or small groups

Using Proxies for “Protected” Attributes (even unknowingly)

Protection of Intellectual Property versus Explainability, Transparency and Accountability

Law of Unintended Consequences: people will use your products and solutions in “creative” ways that

- May not align with your principles, or

- May not be technically appropriate.

So what can you do? What should you do?

IV. What Can You Do? What Should You Do?

Consider Ethical Choices Across a Data Science Life Cycle

Ask a question: Is the question about equity or equality? Who are the stakeholders? What are the trade-offs across groups? What are our interests? Recency Bias? Confirmation Bias?

Frame the Analysis: What is the population? Are we using proxy variables? How are metrics for “fairness” affecting groups or individuals? Do we need IRB review (APA 2022)?

Get Data: How was it collected? Was consent required/given for this use? Is there balanced representation of the population? Selection Bias? Availability Bias? Survivorship Bias?

Shape Data: Are we aggregating distinct groups? How do we treat missing data? Are we separating training and testing data?

Model and Analyze: Are we using proxies or over-fitting? How do we treat extreme values? Are we examining assumptions? Are we checking multiple performance metrics? Is our work reproducible?

Communicate Results: Are the graphs misleading? Do we cherry-pick or data snoop? Are we reporting \(p\)-values and hyper-parameters?

Deploy/Implement: Is the deployment accessible to all?

Observe Outcomes: How can we validate assumptions and analyze outcomes for bias?
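Several of these questions (fairness metrics across groups, checking multiple performance metrics, analyzing outcomes for bias) come down to disaggregating results by group rather than reporting one overall number. Here is a minimal sketch in plain Python. The records, the group labels "A" and "B", and the helper `metrics_by_group` are hypothetical; a real audit would use far larger samples, more metrics, and uncertainty estimates.

```python
from collections import defaultdict

def metrics_by_group(y_true, y_pred, groups):
    """Return per-group accuracy, false positive rate, and selection rate.

    y_true, y_pred: sequences of 0/1 labels; groups: a group label per record.
    """
    counts = defaultdict(lambda: {"n": 0, "correct": 0, "fp": 0, "neg": 0, "selected": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["correct"] += int(t == p)
        c["selected"] += int(p == 1)
        if t == 0:  # actual negatives, the denominator of the false positive rate
            c["neg"] += 1
            c["fp"] += int(p == 1)
    report = {}
    for g, c in counts.items():
        report[g] = {
            "accuracy": c["correct"] / c["n"],
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else float("nan"),
            "selection_rate": c["selected"] / c["n"],
        }
    return report

# Toy, made-up labels and predictions for two hypothetical groups.
y_true = [1, 0, 1, 0, 1, 0, 1, 0, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

report = metrics_by_group(y_true, y_pred, groups)
for g, m in sorted(report.items()):
    print(g, {k: round(v, 2) for k, v in m.items()})

# Demographic parity difference: the gap in selection rates between groups.
gap = abs(report["A"]["selection_rate"] - report["B"]["selection_rate"])
print("selection-rate gap:", round(gap, 2))
```

In this toy data both groups are selected at the same rate (a selection-rate gap of 0), yet group B has an accuracy of 0.2 and a false positive rate of 0.75, versus 1.0 and 0.0 for group A. One fairness metric can look fine while others reveal a problem.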

Consider Frameworks for Responsible Data Science

- Guidelines for the UK Government

- The US Department of Defense

- The 5 C’s Framework

- Google AI Principles

- IBM Principles for Trust and Transparency

Published by the Royal Statistical Society and the Institute and Faculty of Actuaries in A Guide for Ethical Data Science

- Start with clear user need and public benefit

- Be aware of relevant legislation and codes of practice

- Use data that is proportionate to the user need

- Understand the limitations of the data

- Ensure robust practices and work within your skillset

- Make your work transparent and be accountable

- Embed data use responsibly

DoD AI Capabilities shall be:

Responsible. … exercise appropriate levels of judgment and care, while remaining responsible for the development, deployment, and use….

Equitable. … take deliberate steps to minimize unintended bias in AI capabilities.

Traceable. … develop and deploy AI capabilities such that relevant personnel have an appropriate understanding …, including with transparent and auditable methodologies, data sources, and design procedures and documentation.

Reliable. … AI capabilities will have explicit, well-defined uses, and the safety, security, and effectiveness … will be subject to testing and assurance within those defined uses ….

Governable. … design and engineer AI capabilities to fulfill their intended functions while possessing the ability to detect and avoid unintended consequences, and the ability to disengage or deactivate deployed systems that demonstrate unintended behavior.

(DoD 2020)

A guide to Building “Trustworthy” Data Products

Based on the golden rule: Treat others’ data as you would have them treat your data

Consent - Get permission from the owners or subjects of the data before …

Clarity - Ensure permission is based on a clear understanding of the extent of your intended usage

Consistency - Build trust by ensuring third parties adhere to your standards/agreements

Control (and Transparency) - Respond to data subject requests for access/modification/deletion, e.g., the right to be forgotten

Consequences (and Harm) - Consider how your usage may affect others in society and potential unintended applications.

- Be socially beneficial.

- Avoid creating or reinforcing unfair bias.

- Be built and tested for safety.

- Be accountable to people.

- Incorporate privacy design principles.

- Uphold high standards of scientific excellence.

- Be made available for uses that accord with these principles.

We will not design or deploy AI in the following application areas: Weapons, Surveillance, …

- The purpose of AI is to augment human intelligence. The purpose of AI and cognitive systems developed and applied by IBM is to augment – not replace – human intelligence.

- Data and insights belong to their creator.

- IBM clients’ data is their data, and their insights are their insights. Client data and the insights produced on IBM’s cloud or from IBM’s AI are owned by IBM’s clients. We believe that government data policies should be fair and equitable and prioritize openness.

- New technology, including AI systems, must be transparent and explainable.

- For the public to trust AI, it must be transparent. Technology companies must be clear about who trains their AI systems, what data was used in that training and, most importantly, what went into their algorithm’s recommendations. If we are to use AI to help make important decisions, it must be explainable.

(IBM 2019)

To Be an Ethically Responsible Data Scientist …

Integrate ethical decision making into your analysis life cycle.

- Recognize the legal, professional, and ethical issues surrounding your work.

  - See Weapons of Math Destruction (O’Neil 2017)

- Ask Questions of Peers, Managers, Mentors, and Experts to get guidance.

- Document your sources and approach: data, code, and references.

  - Use Literate Programming, e.g., Quarto (Posit 2022)

- Protect your data, especially Personally Identifiable Information (PII) or health information covered by HIPAA.

  - Consider Differential Privacy, as used by the Census Bureau (ETI 2022).

- Create reproducible analysis and solicit appropriate reviews and feedback.

  - Avoid “Spurious Correlations”. (Vigen 2021)

  - Produce Results that Reveal, Don’t Conceal. (Weissgerber Tracey L. 2019)

  - Don’t create Examples of Misleading Statistics. (Calzon 2021)

  - Do consider Methods for Better Graphs. (DatatoViz 2021)

  - Check Publishing and Conference Guidelines, e.g., AutoML’s. (AutoML 2022)

- Consider your own biases. (Acton 2022)
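To make the differential-privacy recommendation more concrete, here is a minimal sketch of the textbook Laplace mechanism for a single counting query. It is not the Census Bureau’s disclosure avoidance system, which is considerably more elaborate. The ages and the helper names `laplace_noise`, `private_count`, and `is_over_65` are hypothetical. The key idea: adding or removing one person changes a count by at most 1, so adding Laplace noise with scale \(1/\varepsilon\) gives \(\varepsilon\)-differential privacy; a smaller \(\varepsilon\) means more noise and stronger privacy.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1.0 / scale  # expovariate takes a rate; its mean is 1/rate = scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    A counting query has sensitivity 1: adding or removing one person changes
    the true count by at most 1, so Laplace noise with scale 1/epsilon suffices.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

def is_over_65(age: int) -> bool:
    return age > 65

# Hypothetical data: ages of 1,000 synthetic survey respondents.
rng = random.Random(42)
ages = [rng.randint(18, 90) for _ in range(1000)]
true_count = sum(1 for a in ages if is_over_65(a))

# Smaller epsilon -> larger noise -> stronger privacy, lower accuracy.
for eps in (0.1, 1.0, 10.0):
    released = private_count(ages, is_over_65, eps, rng)
    print(f"epsilon={eps:>4}: true={true_count}, released={released:.1f}")
```

Because many noisy releases of the same count could be averaged to recover the true value, real deployments also track a total privacy budget across all queries.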

To paraphrase American Frontiersman Davy Crockett,

“Try to be sure you are right, then Go Ahead!”

Stay Current on Emerging Ideas

- Stanford Institute for Human-Centered AI’s 2022 AI Index Report (HCAI 2022)

- Review articles on Big Data/Data Science/AI Ethics, e.g.,

- Review the Inventory of Awesome AI Guidelines (IEAI-ML 2021)

- Investigate Academic Centers

- Consider popular press articles

- Analyze Case Studies, e.g., Princeton University’s Case Studies (University 2018)

Summary for Today

- Discussed Legal, Professional, and Ethical Considerations

- Identified sources of ethical issues across the Data Science Life Cycle

- Examined frameworks and best practices for responsible Data Science

  - Philosophical Frameworks

  - Professional Codes of Conduct

  - Making Choices to mitigate biased or harmful outcomes.

Going forward, analyze data science work from legal, professional, and ethical perspectives and examine the choices you and others make while learning more about responsible data science.

“We must address, individually and collectively, moral and ethical issues raised by cutting-edge research in artificial intelligence and biotechnology, which will enable significant life extension, designer babies, and memory extraction.” - Klaus Schwab

“Action indeed is the sole medium of expression for ethics.” - Jane Addams

V. References